The companies combine their expertise to deliver intelligent, automated, and context-rich solutions while unlocking new growth potential.

Stuttgart and Wiesbaden, 3 November 2025 – Theobald Software, a leader in seamless SAP integration, today announces the acquisition of bluetelligence GmbH (“bluetelligence”), a software company specializing in SAP metadata management. Through this acquisition, the two companies are combining their SAP expertise and expanding their portfolio with capabilities for metadata management and data intelligence – driving greater efficiency, accuracy and deeper insights into SAP systems.

“The combination of Theobald Software and bluetelligence is a major step forward in providing our customers with even more comprehensive data transparency,” said Dr. Thomas Bruggner, CEO of Theobald Software. “bluetelligence’s technology is the perfect complement to our solutions. With the added dimension of metadata, our customers will be able to use, automate, document, and analyze SAP data more intelligently than ever before. This creates real value for businesses—especially in light of the growing demands driven by artificial intelligence.”

Torsten Schmidt, founder and former CEO of bluetelligence, also sees the partnership as a natural evolution: “Our expertise in SAP metadata management adds a crucial component to Theobald Software’s seamless SAP integration. Delivering the best possible customer experience in SAP environments has always been our focus, and the synergy of our software products makes that possible. Customers will benefit from a comprehensive portfolio and deeper insights based on decision-critical data.” Schmidt will continue to play an active and advisory role going forward.

Looking Ahead Together

Theobald Software and bluetelligence share a common vision: to continuously advance their technologies and help businesses address the challenges of digital transformation within the SAP ecosystem. Their focus is on ensuring optimal data availability and usability, the foundation for data-driven decisions and the successful application of artificial intelligence. By combining their expertise, both companies are laying the groundwork for sustainable growth and reinforcing their technology leadership in the SAP domain. Customers will benefit from practical, innovative solutions that measurably enhance transparency, efficiency, and data intelligence.

About Theobald Software

Founded in 2004 in Stuttgart, Theobald Software is a global leader in seamless SAP integration. The company’s solutions enable the use of SAP data in virtually any third-party system—on-premises or in the cloud. Over 1,700 active customers worldwide across all industries and company sizes rely on Theobald Software’s expertise to make efficient and strategic use of their SAP data. With offices in Europe and the United States, around 60 highly qualified professionals support numerous large enterprises and mid-sized companies, including the majority of Germany’s DAX-listed corporations. Partnering with industry leaders such as SAP and Microsoft, Theobald Software is dedicated to continuously developing optimal integration solutions.

About bluetelligence

Founded in 2008 in Wiesbaden, bluetelligence develops market-leading software for documenting, analyzing, migrating, translating, and cataloguing SAP metadata. Guided by the motto “Work smarter, not harder,” its automated solutions make everyday work easier for more than 200 customers across Europe in the field of Business Intelligence.

Press Contact Theobald Software GmbH

Benjamin Föll

Head of Global Marketing & Communications

E-mail: benjamin.foell@theobald-software.com

Press Contact bluetelligence GmbH

Charlotte Fiox

Marketing Manager

E-mail: charlotte.fiox@bluetelligence.de

Press Contact Maisberger GmbH

Anja Söldner / Susanne Leisten

E-mail: theobald-software@maisberger.com

In today’s data-driven world, effective data cataloging is essential to efficiently manage, understand and make the best use of data. And especially in companies with complex SAP landscapes, Data Cataloging is becoming increasingly important to ensure data quality, meet regulatory requirements and facilitate data-driven decisions. This article compares the three main options available to SAP-centric BI landscapes: SAP’s Datasphere Catalog, the Collibra Data Intelligence Cloud and bluetelligence’s Enterprise Glossary.

Why Data Cataloging Is Important in the SAP Environment

SAP systems are the backbone of many companies and contain an enormous amount of business-critical data. Without structured Data Cataloging, the following challenges often arise:

- Data retrieval: Where is the required data located?

- Understanding data: What do the data fields mean and how are they related?

- Data quality: Is the data reliable and up-to-date?

- Compliance: Are regulatory requirements being met?

A Data Cataloging tool helps to solve these problems by offering a central overview of all relevant data resources and providing contextual information. This makes collaboration between business users, IT teams and data experts much easier.

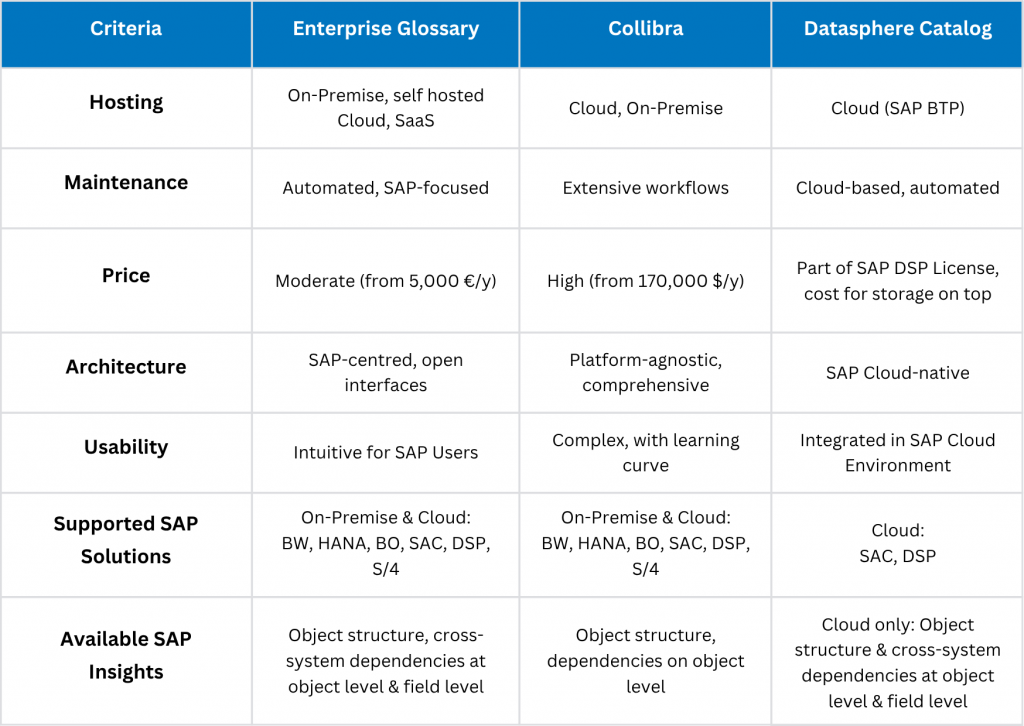

There are three prominent cataloging tools for SAP-dominated BI landscapes: the Enterprise Glossary, the Collibra Data Intelligence Cloud and the SAP Datasphere Catalog. In this article, we compare the solutions in categories such as price, setup, maintenance, hosting, usability and range of functions. Even though we are the provider of the Enterprise Glossary ourselves, we have endeavored to remain neutral. If you would like to find out more on the topic, the consulting firm infomotion has published another comparison.

The Candidates at a Glance: DSP, Collibra and Enterprise Glossary

Enterprise Glossary (bluetelligence)

The Enterprise Glossary is a specialized solution for SAP environments that provides a comprehensive semantic glossary. It combines business terms with technical metadata from SAP systems and is characterized by its ease of use and direct integration into the SAP world.

Collibra Data Intelligence Cloud

Collibra is a leading enterprise data intelligence platform with comprehensive functions for Data Governance, Data Quality and Data Cataloging. The solution is aimed at companies with complex, heterogeneous data landscapes and offers broad scalability.

Datasphere Catalog (SAP)

The Datasphere Catalog is integrated into SAP Datasphere (formerly Data Warehouse Cloud) and is primarily used to manage and structure data in the SAP cloud environment. This approach is particularly interesting for companies that already rely heavily on SAP cloud solutions.

Detailed Comparison of the Data Catalogs

1. Price

The Enterprise Glossary offers an attractive price-performance ratio, starting at €5,000 per year including an unlimited number of read-only users. Collibra, on the other hand, is in the premium price segment with costs starting at $170,000 per year, which makes it better suited to large corporations. The Datasphere Catalog is part of SAP Datasphere licensing, so companies that already use SAP Datasphere also have access to the tool; however, the license price increases with the required storage and the integration of the metadata.

2. Installation / Set-Up

The Enterprise Glossary can be implemented within two days and synchronizes automatically with SAP systems. The use of Collibra requires a comprehensive setup, which takes more time and requires training. The Datasphere Catalog is integrated into SAP Datasphere and is therefore easily accessible for existing SAP cloud customers.

3. Maintenance

The Enterprise Glossary scores with an automated glossary and predefined templates that minimize maintenance effort. Collibra also offers automation; however, the complexity of the platform means that users usually need more time to familiarize themselves with it. The Datasphere Catalog is cloud-based and largely automated, but functionally limited to the SAP cloud.

4. Hosting

The Enterprise Glossary is flexible and can be hosted both on-premise and in the cloud. Furthermore, bluetelligence also offers a SaaS version. Collibra also offers on-premise and cloud options, but is not SAP-centric. The Datasphere Catalog is only available as a cloud solution in SAP BTP.

5. Usability

The Enterprise Glossary is particularly intuitive for business users and SAP users and is quick to learn. Collibra offers a more comprehensive platform but requires considerably more training. The Datasphere Catalog is easy to use for companies with existing SAP cloud structures.

6. & 7. Supported SAP Solutions and Available SAP Insights

The Enterprise Glossary focuses on SAP-specific Data Cataloging and metadata management, and this focus is reflected in the greater level of detail of its metadata. Despite its strategic partnership with SAP, Collibra is a broad-based data governance platform that reaches far beyond SAP and therefore offers comparatively less depth of SAP information. The Datasphere Catalog primarily provides basic cataloging functions for SAP cloud users and therefore offers fewer options for companies whose hybrid landscape also relies on SAP BW.

While the Datasphere Catalog is limited to cloud objects (SAP Analytics Cloud and SAP Datasphere), Collibra and the Enterprise Glossary cover all common SAP Data & Analytics connectors and objects (SAP BW, SAP HANA, SAP Business Objects, SAP Analytics Cloud, Datasphere & S/4).

Furthermore, the three catalogs differ in the level of detail of the SAP insights they provide. The DSP Catalog can display the detailed structure, relationships and field mapping of cloud objects. For common Data & Analytics objects, Collibra provides less detailed insights, achieved primarily through its partnership with the British company Silwood Technology: structure at object level (measures, dimensions) but not at field level, no cross-system relationships, and only a simple where-used list. The Enterprise Glossary provides detailed insights into cross-system dependencies of all common SAP Data & Analytics objects at object level (and soon also at field level).
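The difference between object-level and field-level insight can be made concrete with a minimal Python sketch. For illustration only, it assumes that field mappings are available as (source object, source field, target object, target field) tuples. All object and field names are hypothetical, and the code does not reflect how any of the three catalogs stores lineage. Collapsing field mappings always yields object-level lineage, but the reverse is not possible.

```python
from collections import defaultdict

# Hypothetical field mappings: (source_object, source_field, target_object, target_field).
# All names are illustrative; no real catalog or SAP API is involved.
FIELD_MAPPINGS = [
    ("S4_PURCH_ITEM", "NET_AMT", "DSP_VIEW_PURCHASING", "NET_VALUE"),
    ("S4_PURCH_ITEM", "QTY", "DSP_VIEW_PURCHASING", "QUANTITY"),
    ("BW_ADSO_PURCH", "AMOUNT", "DSP_VIEW_PURCHASING", "NET_VALUE"),
    ("DSP_VIEW_PURCHASING", "NET_VALUE", "SAC_STORY_PURCHASING", "Net Value"),
]


def object_level_lineage(mappings):
    """Collapse field-level mappings into target object -> sorted source objects."""
    edges = defaultdict(set)
    for source_obj, _source_field, target_obj, _target_field in mappings:
        edges[target_obj].add(source_obj)
    return {target: sorted(sources) for target, sources in edges.items()}


def field_sources(mappings, target_obj, target_field):
    """Direct source fields that feed one specific target field."""
    return sorted(
        (source_obj, source_field)
        for source_obj, source_field, t_obj, t_field in mappings
        if t_obj == target_obj and t_field == target_field
    )


if __name__ == "__main__":
    print(object_level_lineage(FIELD_MAPPINGS))
    print(field_sources(FIELD_MAPPINGS, "DSP_VIEW_PURCHASING", "QUANTITY"))
```

At object level, DSP_VIEW_PURCHASING appears to depend on both S4_PURCH_ITEM and BW_ADSO_PURCH. Only the field-level mappings show that QUANTITY comes from S4_PURCH_ITEM alone.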

Conclusion: Which Tool Is the Right Fit for Me?

The right Data Cataloging tool depends on the individual BI landscape and objectives:

Enterprise Glossary: Ideal for companies with SAP-centric data that are looking for a cross-system, fast and cost-effective solution and want to document & analyze holistically (lineage down to field level, system integration). The solution can also add value as a co-existing metadata glossary alongside existing Data Catalogs. In addition to SAP, the Enterprise Glossary also offers Microsoft Power BI connectivity.

Collibra: Perfect for companies with heterogeneous data landscapes and high data governance requirements. The focus is less on in-depth SAP insights and more on broad connectivity and governance/compliance.

Datasphere Catalog: Particularly suitable for companies that already use SAP cloud solutions and require seamless integration. The catch: Those whose data is still predominantly rooted in SAP BW will not receive any information about data sources, lineage etc. here.

If you focus on SAP cloud technology, DSP Catalog and Collibra are useful tools – as long as you can live with limited transparency when it comes to the system landscape.

Anyone who wants to document and analyze holistically, including BW, BO, HANA, SAC and Co., will need field-level lineage and system integration.

💡 By the way: We have made the technology that the Enterprise Glossary uses to automatically extract deep metadata insights available for other Data Catalogs as well, in the form of our Metadata API. The API has already been successfully integrated into the Data Catalogs of our partners dataspot., synabi, zeenea and Alex Solutions, where it provides the SAP insights that our partners present in a comprehensible, visualized form.

Got Curious?

Find out if our candidate, the Enterprise Glossary, suits your requirements: You can get an impression of our tool and its use cases on our website. There, you will also find the opportunity to test the Enterprise Glossary as part of a 6-month PoC or to contact us for more information.

Best Practice Example: Our customer, an international retail company, uses the Enterprise Glossary to optimize Data Cataloging in its SAP landscape. The direct integration enabled the company to merge business terms and technical metadata, resulting in more efficient collaboration between IT and business departments (BI self-service). Within a few weeks, data discovery time was reduced by 40% and compliance with regulatory requirements improved significantly.

The more complex the SAP data flow, the less motivation there is for technical documentation. This blog article discusses the potential of technical SAP documentation and the risks of neglecting it. It also shows a practical approach to documenting SAP data flows efficiently across systems: automation through SAP add-on tools.

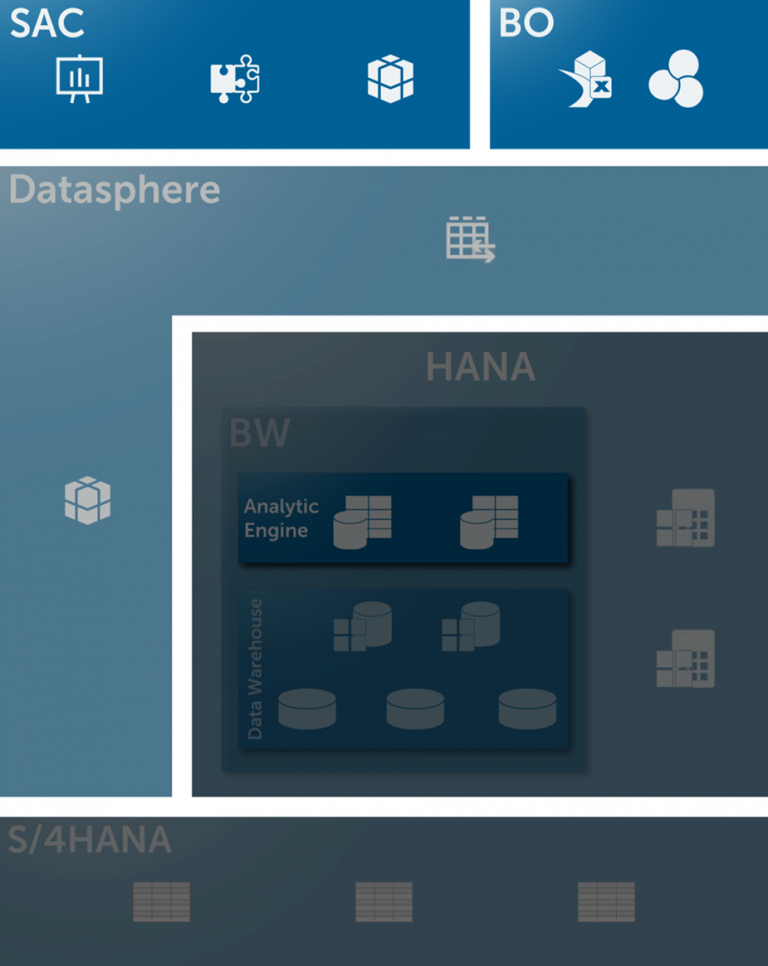

Complex SAP Data & Analytics Structures Complicate Documentation

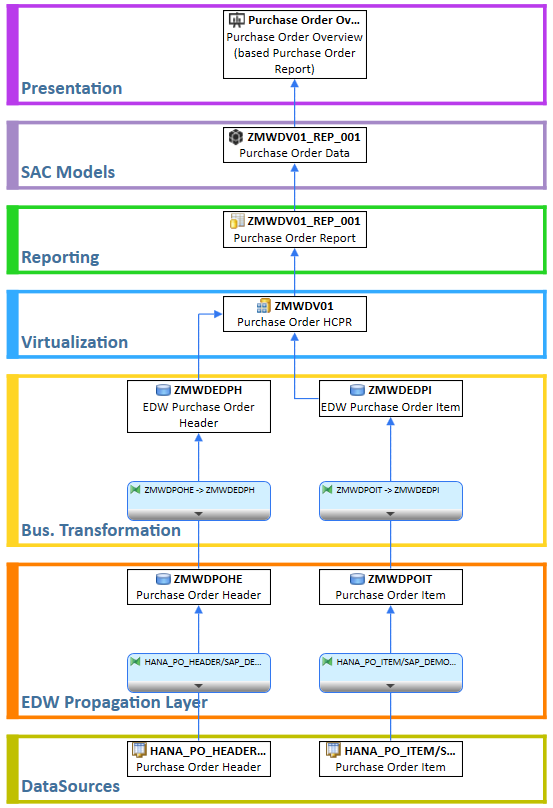

SAC Stories rely on Datasphere Views, which in turn rely on Calculation Views in HANA or tables in S/4HANA, BW Queries, InfoProviders, DataSources… – modern SAP Data & Analytics architectures combine a variety of systems and technologies.
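A minimal Python sketch can show why such chains are hard to document by hand. It models a data flow as a dependency graph and walks it in both directions: upstream to find the data sources (lineage) and downstream to find everything affected by a change (where-used). All object names are hypothetical. This is not how Docu Performer or any other tool works internally; in practice, the dependency information would have to be read from the systems' metadata.

```python
from collections import deque

# Hypothetical data flow: each object maps to the objects it reads from.
# Names are illustrative only and mirror the layering described above.
DEPENDS_ON = {
    "SAC Story: Purchasing": ["DSP View: Purchasing", "BW Query: ZPUR_Q01"],
    "DSP View: Purchasing": ["HANA Calc View: CV_PURCH", "S/4HANA Table: ZPUR_ITEM"],
    "BW Query: ZPUR_Q01": ["InfoProvider: ZPUR_C01"],
    "InfoProvider: ZPUR_C01": ["DataSource: ZPUR_DS01"],
}


def upstream(graph, start):
    """Breadth-first walk collecting every object that `start` depends on."""
    seen, queue = set(), deque([start])
    while queue:
        for source in graph.get(queue.popleft(), []):
            if source not in seen:
                seen.add(source)
                queue.append(source)
    return seen


def where_used(graph, obj):
    """Invert the edges, then walk them: every object affected by a change to `obj`."""
    consumers = {}
    for target, sources in graph.items():
        for source in sources:
            consumers.setdefault(source, []).append(target)
    return upstream(consumers, obj)


if __name__ == "__main__":
    print(sorted(upstream(DEPENDS_ON, "SAC Story: Purchasing")))
    print(sorted(where_used(DEPENDS_ON, "S/4HANA Table: ZPUR_ITEM")))
```

Even this toy graph spans four systems. A real landscape with hundreds of stories and queries quickly becomes impossible to keep current by hand.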

As complexity increases, the motivation and capacity to document a long, branching data flow usually decrease. On top of that, new requirements take priority anyway and are simply implemented directly in the system. This practice, however, is not without risks, whether in terms of quality, compliance or development speed. It also wastes potential: the list of use cases in which technical SAP documentation pays off is long:

Advantages of Technical SAP Documentation

- Fast knowledge transfer when onboarding new team members or when projects are handed over by external or internal staff

- System and process analyses by mapping data origin, data transformation and data lineage

- Faster error analysis and debugging in dashboards

- Thorough change management / impact analyses

- Audits & compliance requirements (proof of data flows, responsibilities, transformation logic, etc.)

- Redesigns & system migrations (simplified analysis of legacy systems, optimal setup of structured target architecture, e.g. for BW on HANA → BW/4 or Datasphere migration)

- Reusability & standardization (documented applications serve as templates for new developments)

Game Changer: Automated SAP Documentation

What many SAP developers don’t know: Thanks to automated SAP add-on tools, it has long been possible to benefit from the advantages of SAP documentation without wasting time. Integrated via function modules, Docu Performer documents entire data flows across systems at the touch of a button.

The SAP documentation tool offers the following possibilities:

- Automated documentation of entire data flows or individual objects as well as any comments created for them

- Export of a structured, searchable document with a table of contents (Word, Excel, PowerPoint, PDF or HTML) and optional upload to Confluence

- Supported technologies for documentation: SAP BW – SAP BW/4HANA – SAP Business Objects – SAP Analytics Cloud – SAP Datasphere – SAP ECC – SAP S/4HANA

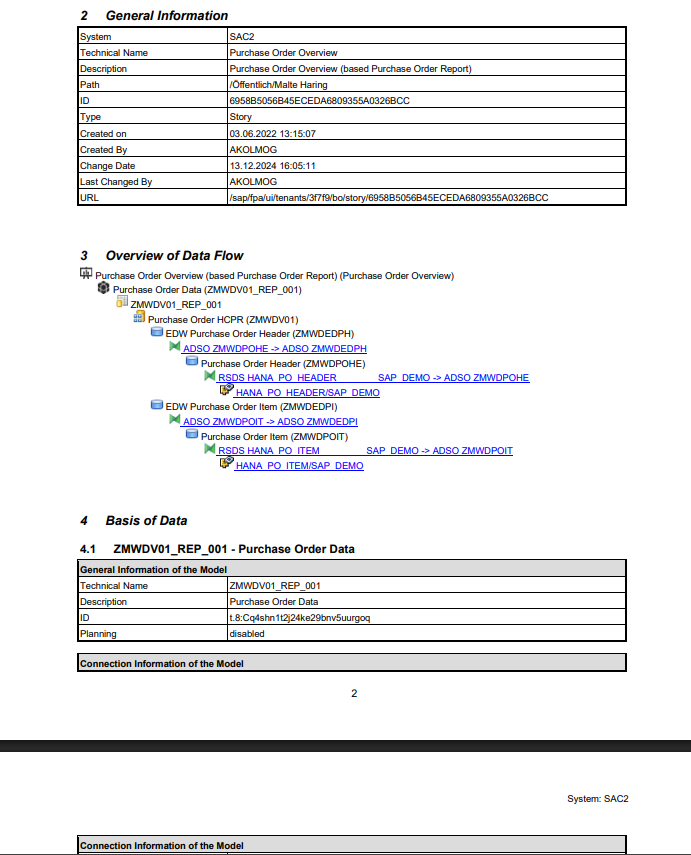

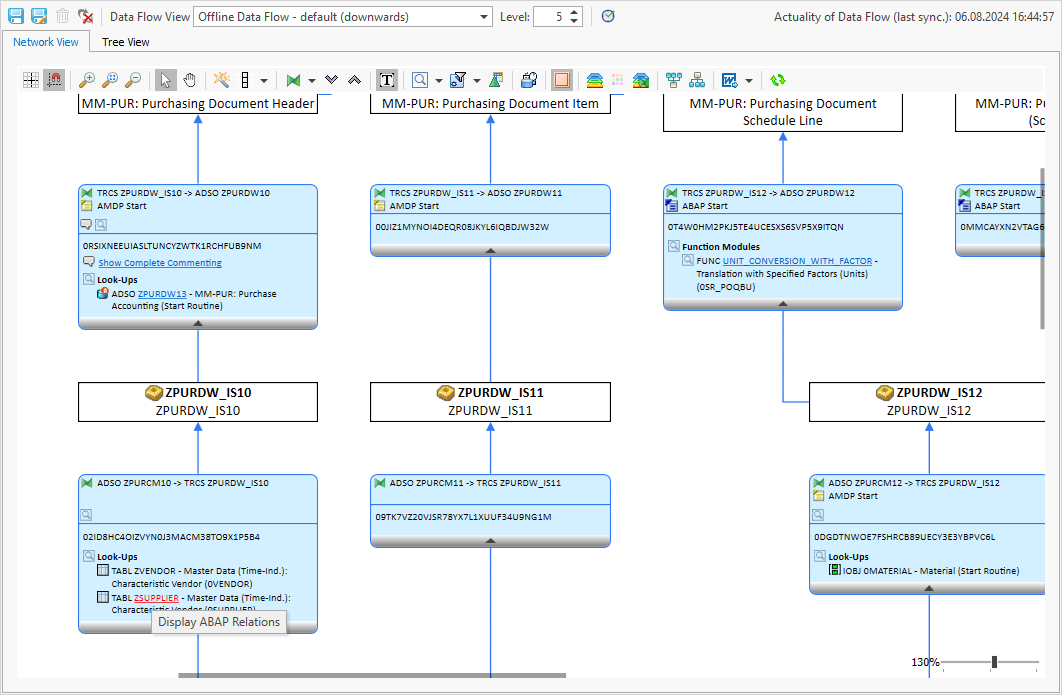

Here is an example of how to document the data flow of an SAC story from a purchasing dashboard:

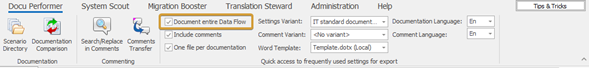

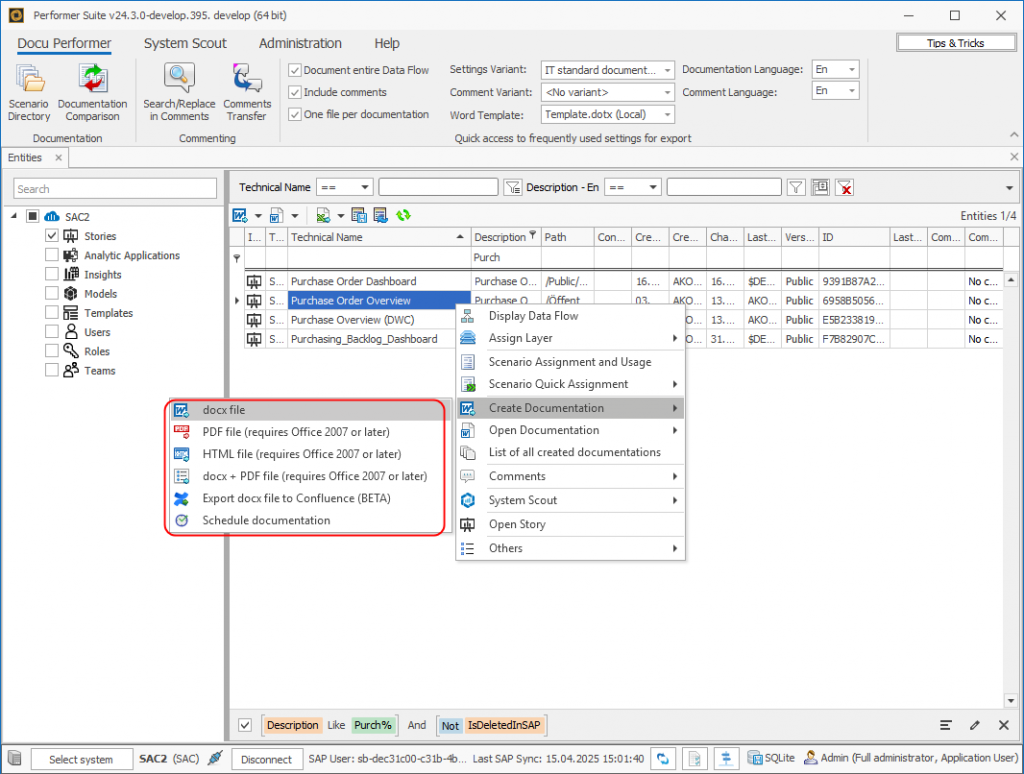

Object Selection and Variants:

In the settings, you can first define whether individual or all objects of the story should be documented (“Document Entire Dataflow”, available with version 24.2) and whether existing comments on the objects should also be documented. You can also specify the level of detail and the language of the documentation to suit different target groups.

Export:

As export options, you can select the desired document format (Word, Excel, PowerPoint, PDF, HTML) and specify whether the document should be uploaded to Confluence to make it centrally available.

Final Document:

The result is detailed documentation in which all objects of the data flow are documented in a searchable, clearly structured form. Here you can see the information content of the SAP documentation for yourself in the exported PDF.

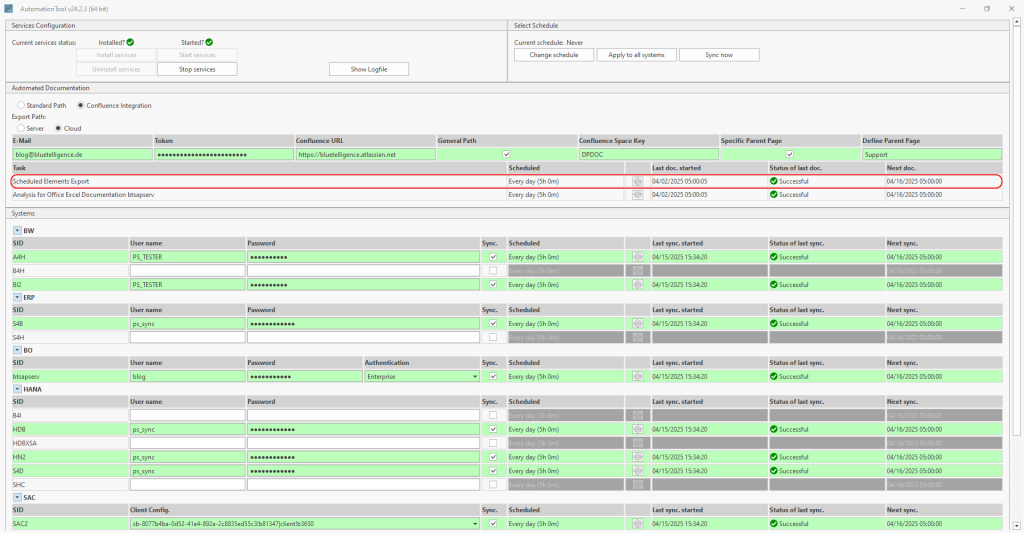

Schedulability:

Another exciting feature is automated updating. Documentation can be automated using scheduled jobs so that up-to-date information is available on a daily basis, for example.

Conclusion: SAP Documentation Must Grow with the Complexity of the System

Manual documentation is a thing of the past – in modern SAP Data & Analytics architectures, automated documentation is not a luxury, but a decisive factor for efficiency, quality and scalability. Those who rely on smart solutions at an early stage create a resilient basis for agile further development – without having to constantly start from scratch.

You can easily try out how the automated documentation of your own data flows feels in practice: With a free trial version via our website.

Self-service, data democracy and tools such as SAC or Datasphere have long been an integral part of the business intelligence bubble. The demand for a self-service BI is high – and has been for a long time: As early as 2016, 80% of the companies surveyed in a BARC study stated that they were already using or planning to use self-service BI tools. We have since seen a strong business orientation of data & analytics tools and a change in the role of business users, who are now expected to shoulder much more responsibility for creating reports and dashboards independently – without the support of IT.

This paradigm shift is certainly a positive one; after all, efficient processes and data-driven decisions are crucial. However, especially with SAP BI tools, practice still lags behind the theory of self-service BI. We often observe that business users are still far from empowered and continue to rely on information and assistance from IT.

This article discusses which obstacles are responsible for this, why they arise and how genuine data democracy can be achieved. We can tell you this much in advance: metadata is the key.

Status Quo: SAP & Self-Service BI

In the context of SAP Data & Analytics, the concept of self-service BI can be broken down into two components: Modeling capabilities and (meta)data transparency.

SAP Analytics Cloud (SAC) and Datasphere enable business users to carry out modeling independently – thanks to an intuitive user interface and low-code approaches. IT skills are no longer absolutely necessary to create interactive dashboards, visualize data and perform ad-hoc analyses. At the same time, the Space concept offers the perfect opportunity to create isolated content areas under the sole responsibility of the business departments.

With regard to data transparency, SAP already offers a number of tools in these technologies that support BI self-service – especially in these areas:

- The graphical representation of data models

- The integrated Data Catalog

- The strategic cooperation with Collibra

Of course, it should also be mentioned at this point that it remains to be seen how the announcements regarding the Business Data Cloud will affect these two self-service components in the future.

So much for the theory. In practice, the data transparency described above reaches its limits in a very common situation: in most companies, older SAP solutions are part of the architecture alongside the modern tools. Specifically, almost all large companies continue to use one or more SAP BW systems, which in most cases have grown over years and decades and are highly complex. HANA Calculation Views or CDS Views consumed by SAC stories may also have been tried out in the past. And there may be countless AfO reports in the front end that the business department cannot part with. Beyond SAP, Power BI is of course also popular and widely used.

We can safely assume that most companies will continue to use this hybrid approach of old and new technologies for some time to come, regardless of the newly announced option of lifting the BW system into the BDC as a private cloud edition.

Limits of a Self-Service BI

So much for the framework conditions. If business users are now “let loose” on this architecture and expected to put the self-service BI idea into practice, they face many layers beneath the front-end tools SAC, BO and Co. Without in-depth IT knowledge and access to these layers, the SAP architecture is a black box to them.

One can already guess that the promising approach of self-service BI, according to which specialist departments are able to search data and create reports without in-depth technical knowledge, is being undermined by the framework conditions of the non-transparent reality.

In discussions with our customers, we were able to work out what information business users are looking for and thus identify the following key questions that continue to be asked of IT, given the conditions:

- Which reports or dashboards are available for my topic? Who is responsible?

- How are these reports structured technically? Which filters and data sources are used?

- How is my key figure calculated? What is the formula behind it?

- What has changed technically in my report since yesterday?

The sobering realization is that using SAP tools with self-service BI functionality is not enough on its own to establish genuine self-service BI in the company. The tools do not give business users enough information on everyday issues and continue to tie them to IT. Alternatively, business users might not ask at all: instead of searching for existing reports, they simply create new ones, contributing to a growing graveyard of unused dashboards, views, and tables.

You can guess what’s coming next since we have already addressed the solution to this problem in the introduction:

Metadata as the Key to a Functioning Self-Service BI

Now, this is where the main character enters the stage – the metadata, of course.

Why is it so important? Because it describes the data, the data sources and their relationships, and provides valuable context. In short, metadata makes data understandable and findable. Without it, the key to using data effectively is missing.

Unfortunately, this metadata is not served to business users (and developers) on a platter; it has to be found with considerable effort, prepared and then made accessible. This is precisely the challenge we at bluetelligence have been tackling in more than 15 years of software development: we search the backend tables of SAP applications, read the metadata and prepare it so that everyone can understand it.

The question that arises now is of course: How can this valuable information be made available to business users? And this is where data catalogs come into play.

A company-wide data catalog serves as a central knowledge base for metadata and data. It enables companies to provide information in a structured way and make it accessible to a wide range of users. This makes it the basis for genuine data democratization and supports sustainable data management.

In the following section, we look at how a data catalog can help with the challenges we have outlined above:

Basic Requirements of a Data Catalog:

- Simple and centralized access for all business users, e.g. via browser

- User-friendly in terms of UX and UI

- Automatic updating of technical metadata – best case, scheduled via jobs

- Support of common BI solutions (SAP, Power BI, etc.)

- Easy searchability, ideally with filter options

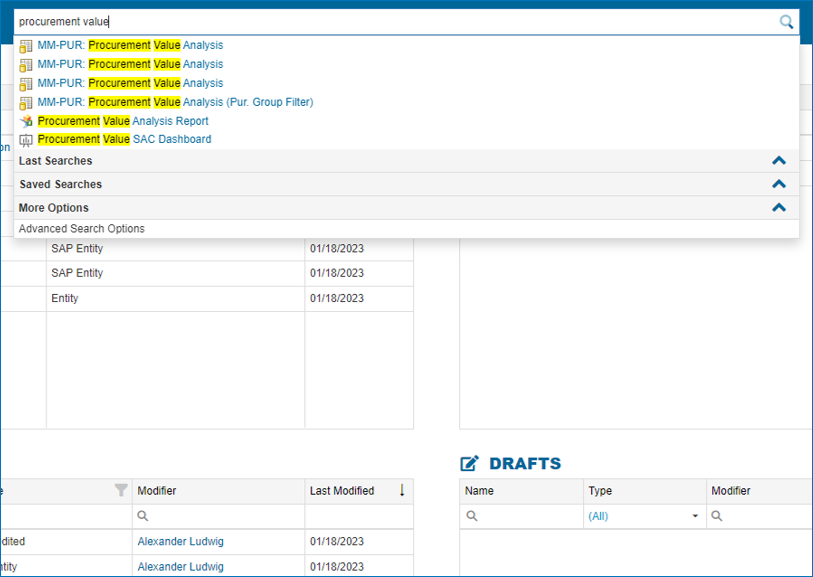

- Can be called from SAP applications (e.g. from an SAC story)

Find suitable reports/dashboards:

- based on technical name

- based on a source object (e.g. BW Query, Analytic Model…), essentially “Where-Used”

Display the context of reports/dashboards:

- Display the technical structure of all reports/dashboards in an understandable way – e.g. check filters at a glance

- Offer the possibility of documentation beyond technical details, e.g. business annotations or responsibilities; this improves the understanding of data and its possible uses for different user groups

Understand key figures:

- Display formula of calculated key figures

- Graphical view of the relationships (so-called driver tree)

Data Lineage:

- Identify data sources quickly with the help of graphical representations
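A small Python sketch can illustrate the requirements for key figure formulas and driver trees. For illustration only, it assumes that each calculated key figure is stored as an operator plus a list of operand key figures. The key figure names and formulas are hypothetical, and real BW or SAC formulas support far more than simple arithmetic.

```python
# Hypothetical key figure definitions: a calculated key figure maps to
# (operator, operand key figures). Names not in the dict are basic key figures.
CALCULATED = {
    "Contribution Margin Ratio": ("/", ["Contribution Margin", "Net Revenue"]),
    "Contribution Margin": ("-", ["Net Revenue", "Variable Costs"]),
    "Net Revenue": ("-", ["Gross Revenue", "Discounts"]),
}


def formula(name):
    """Expand a key figure recursively into a parenthesised formula of basic key figures."""
    if name not in CALCULATED:
        return name
    operator, operands = CALCULATED[name]
    return "(" + f" {operator} ".join(formula(op) for op in operands) + ")"


def driver_tree(name, depth=0):
    """Return the driver tree as indented lines, one key figure per line."""
    lines = ["  " * depth + name]
    if name in CALCULATED:
        for operand in CALCULATED[name][1]:
            lines.extend(driver_tree(operand, depth + 1))
    return lines


if __name__ == "__main__":
    print(formula("Contribution Margin Ratio"))
    print("\n".join(driver_tree("Contribution Margin Ratio")))
```

The formula view answers "what is the formula behind it?" in a single line. The indented driver tree shows which basic key figures drive the result, and how often each one contributes.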

A tour through the possibilities of our Data Catalog “Enterprise Glossary”


Key Takeaways

A data catalog that contains an overview of the relevant metadata (data sources, where-used, key figure correlations) and presents it in a way that is understandable for business users is the key to an actual self-service BI.

This empowers and relieves the right people:

- Business users who understand the context can act and make well-founded decisions; after all, this is precisely the skill set business users will be expected to have in the future.

- And IT? Ideally, the elimination of numerous tickets will give them more time for strategic development, innovative data solutions and a sustainable BI architecture.

If you would like to try out our Data Catalog, the “Enterprise Glossary” and the insights it contains on metadata from SAP BW, BW/4, S/4, SAC, HANA and Datasphere as well as Microsoft Power BI for yourself, you can do so easily and free of charge by sending a short demo request via our website.

Introduction

Anyone who has ever been involved in an SAP BW migration project knows that the larger and more complex the system, the more important it is to work methodically and standardize as much as possible. Migrating from SAP BW to SAP Datasphere also confronts companies with the challenge of switching from their familiar on-premise solution to a public cloud environment. This transformation process requires not only careful planning, but also a clear vision of how to optimally leverage the advantages of the new cloud technology while preserving proven business logic.

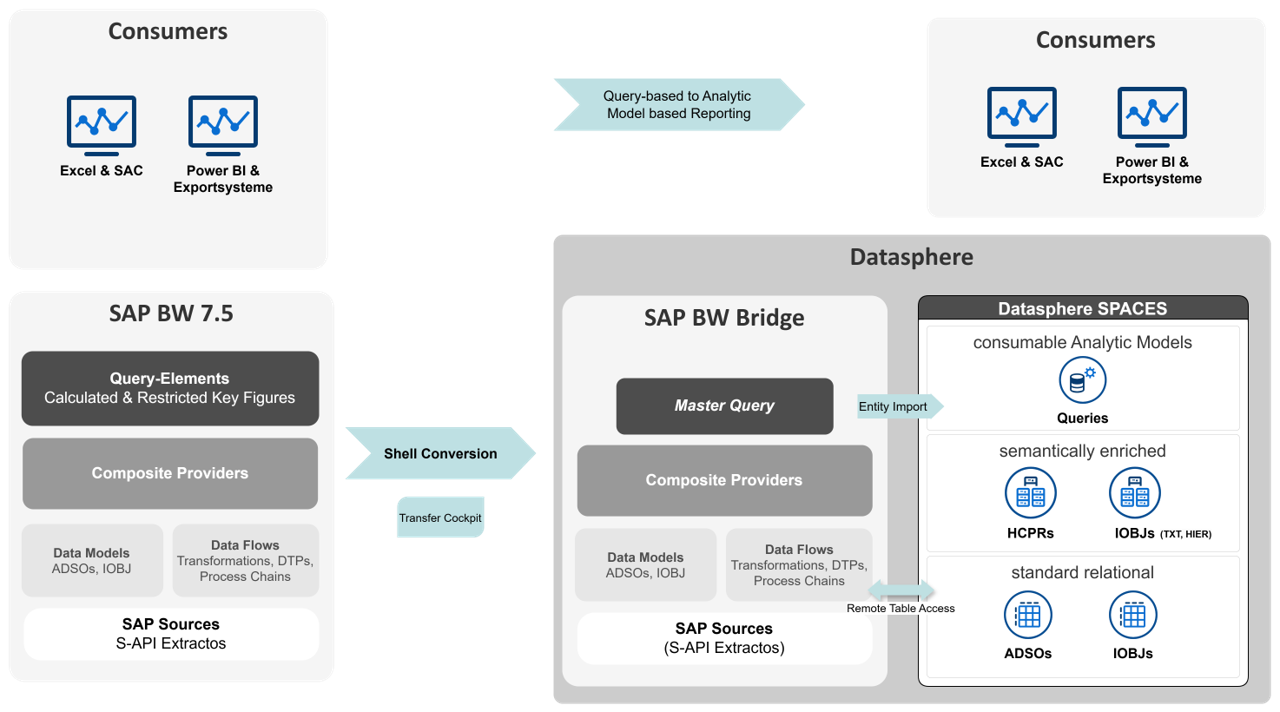

SAP BW Bridge is designed for the latter aspect: it acts as a link between the systems and enables the migration of existing models and business logic. In this joint blog post by NextLytics and bluetelligence, we use a practical example from a project to show how this approach can be implemented successfully. Admittedly, using the bridge means partially forgoing the advantages promised by a greenfield approach in Datasphere. As a “faster” migration option, however, it offers many advantages worth considering. The following reasons favor the use of BW Bridge:

- Investment in BW models: Often, a lot of effort has been expended in the development of specific BW models that contain complex business logic and customized data flows – sometimes with extensive ABAP logic.

- Limited availability of CDS-based extractors: In cases where SAP-based source systems do not yet provide the required CDS extractors (e.g. SAP ECC systems), classic S-API extractors must be used. However, it is not recommended to connect these directly to SAP Datasphere, as there are limitations, for example, when DataSources require mandatory selection fields or certain delta methods are not supported.

- Lack of specific skills: Often, teams have strong BW and ABAP expertise, but not enough knowledge of SQL or Python, which are used in SAP Datasphere, to rebuild the models natively.

All of these factors applied in the following real-life project example. It involved an extensive, historically grown BW system of an energy supplier with highly specialized models that take into account the particularities of the complex and highly regulated energy market. The project objective was to retain the existing models, business logic and data flows and to map only the reporting layer in SAP Datasphere. In this context, the use of the Performer Suite from bluetelligence proved to be a decisive success factor for an efficient and targeted migration.

Vision and Approach

Before we present the detailed objective of the project, it is important to explain a special feature of BW Bridge in connection with SAP Datasphere. Although queries can be transferred to the BW Bridge during migration, they cannot be executed as in the classic BW system or used as a source for other data targets. Instead, queries are provided in the BW Bridge as metadata. This means that they can be imported and used as a basis for the entity import into SAP Datasphere. This feature plays a central role in our approach.

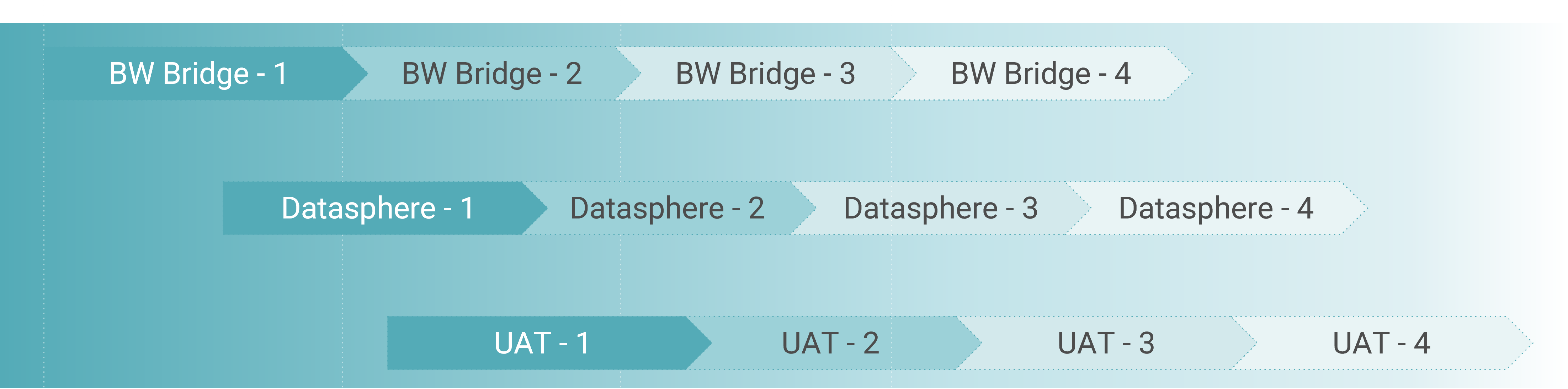

The following diagram illustrates the project’s main objective:

The project defined several key steps that were crucial for the migration from SAP BW to SAP Datasphere:

1. Tool-supported transfer via Shell Conversion:

- Migration of models and data flows up to the composite provider

2. Development and transfer of master queries:

- Mapping of all global and local calculated and restricted key figures as well as all characteristics relevant for reporting

- Creation per composite provider

- Transfer using Shell Conversion

3. Generation of Analytic Models:

- Entity import of the master queries

- Automatic creation of the Analytic Models based on the imported queries

4. Conversion of reporting:

- Conversion of query-based reporting to Analytic Models in the various front-end tools

By strategically using entity import, we were able to automatically generate a large number of the required objects and thus significantly reduce the time required for modeling in Datasphere.

The diagram is deliberately simplified and focuses on the core aspects of the migration. The complete concept includes additional important components:

- Further non-SAP source systems

- Detailed layer concept

- Space concept for the collaboration between central IT and specialist departments

- Implementation of authorizations via data access controls

- Consideration of specific requirements depending on the data recipient (e.g. via ODBC or OData)

For details, please take a look at our partner’s article on the SAP Datasphere reference architecture: NextLytics blog, “Datasphere Reference Architecture – Overview & Outlook”.

Practical Use of the Performer Suite in the Project

Master Query Analysis

One of the key challenges of the project was to create the key figure definitions as templates for the master queries – to do this, we had to analyze the structures of a large number of queries from the customer’s BW system. Even in the planning phase, it was clear to us that we would need a powerful, tool-supported solution for this. As a partner of bluetelligence, we knew the strengths of the Performer Suite and therefore decided to use a temporary license in the project.

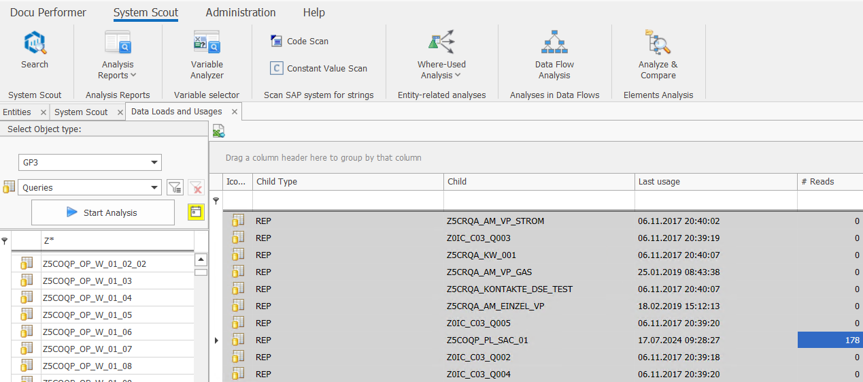

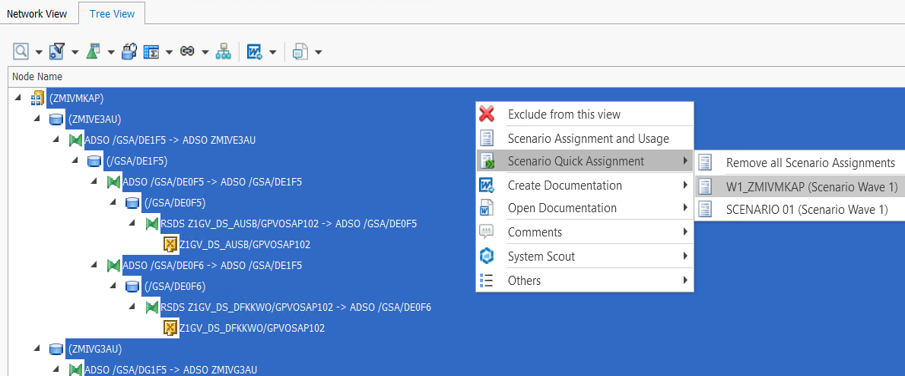

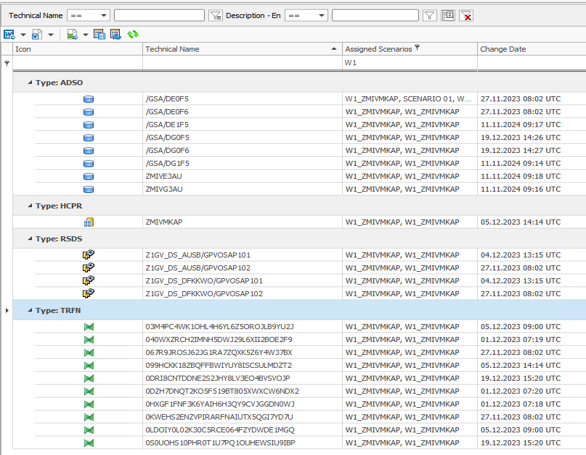

The goal was to create a master query for each composite provider containing all global and local calculated and restricted key figures as well as all characteristics relevant for reporting. To include only the key figures relevant for reporting, we first analyzed all queries executed in the last 18 months using the System Scout analysis “Data Loads and Usages”:

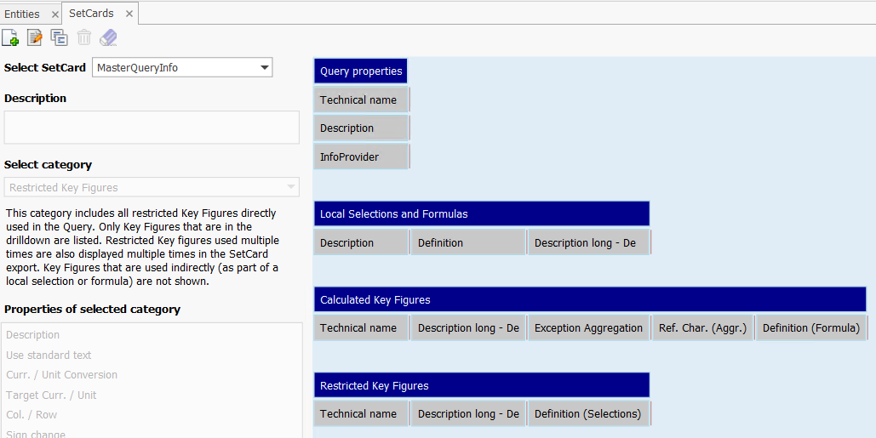

This analysis provided us with a list of all relevant queries for each composite provider. We used the Query SetCard Designer to create a central list of the definitions of all global and local calculated and restricted key figures, setting up a SetCard that collected all the necessary information on the key figures used in the queries.

With the help of the Docu Performer (a tool in the suite), we were then able to export the queries to Excel according to our SetCard template. It was important to deactivate the “One file per documentation” setting so as not to create a separate Excel file for each query. The result was a consolidated overview of the key figures for each composite provider. We used this overview as a template for creating the master queries.
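The consolidation step, turning many per-query exports into one key figure overview per composite provider, can be sketched in Python. This is not the Docu Performer export format or the macro we used: the row layout (`KeyFigureRow`) is a hypothetical stand-in for one line of a SetCard export, and all object names are invented. The sketch keeps each key figure once per composite provider, records which queries use it, and flags names that appear with differing definitions, since those need a manual decision before they go into the master query.

```python
from collections import defaultdict
from dataclasses import dataclass, field

@dataclass(frozen=True)
class KeyFigureRow:
    """Hypothetical export row: one key figure as used in one query."""
    composite_provider: str
    query: str
    key_figure: str
    kind: str        # "calculated" or "restricted"
    scope: str       # "global" or "local"
    definition: str  # formula or restriction as exported

@dataclass
class ConsolidatedKeyFigure:
    kind: str
    scope: str
    definitions: set[str] = field(default_factory=set)
    used_in: set[str] = field(default_factory=set)

    @property
    def has_conflict(self) -> bool:
        """Same name, different definitions across queries: needs a manual decision."""
        return len(self.definitions) > 1

def consolidate(rows):
    """Group export rows into one key figure overview per composite provider."""
    overview = defaultdict(dict)
    for row in rows:
        entry = overview[row.composite_provider].setdefault(
            row.key_figure, ConsolidatedKeyFigure(row.kind, row.scope)
        )
        entry.definitions.add(row.definition)
        entry.used_in.add(row.query)
    return overview

# Hypothetical example data
rows = [
    KeyFigureRow("CP_SALES", "Q_SALES_01", "Margin", "calculated", "global", "Revenue - Cost"),
    KeyFigureRow("CP_SALES", "Q_SALES_02", "Margin", "calculated", "global", "Revenue - Cost"),
    KeyFigureRow("CP_SALES", "Q_SALES_02", "Revenue_2024", "restricted", "local", "Revenue, FISCYEAR=2024"),
    KeyFigureRow("CP_SALES", "Q_SALES_03", "Revenue_2024", "restricted", "local", "Revenue, CALYEAR=2024"),
]
overview = consolidate(rows)
print(sorted(overview["CP_SALES"]["Margin"].used_in))     # ['Q_SALES_01', 'Q_SALES_02']
print(overview["CP_SALES"]["Revenue_2024"].has_conflict)  # True
```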

Planning of the Migration and Data Flow Analysis

At the beginning of the migration, we developed a structured wave plan based on step-by-step conversion over the course of the project instead of a single big bang at the end. This approach allowed us to iteratively migrate reporting scenarios from SAP BW to SAP Datasphere, test them, and conduct user acceptance tests (UAT) with the departments.

This strategy offered several advantages:

- Risk minimization through step-by-step conversion

- Early stabilization of the new environment

- Continuous learning and optimization of the approach

- Flexibility to respond to challenges without disrupting the overall project

As a first step, the customer split the wave planning at the BW InfoArea level. Based on these specifications, we identified the affected composite providers using the entity lists in the Performer Suite. We created lists of these providers, enriched with additional attributes, and made them available to the customer. This allowed the planning to be readjusted precisely, since dependencies meant that not all composite providers from an InfoArea could necessarily be migrated in the same wave.
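The readjustment of waves can be illustrated with a small Python sketch. It assumes a hypothetical input: an initial wave number per composite provider (derived from its InfoArea) and, for each provider, the providers it depends on. The policy shown, postponing a provider until everything it depends on has been migrated, is only one simple option; pulling the dependencies into an earlier wave would be equally valid.

```python
def readjust_waves(planned, depends_on):
    """Postpone providers until every provider they depend on is migrated.

    planned:    composite provider -> initial wave (e.g. derived from its InfoArea)
    depends_on: composite provider -> set of providers it needs (hypothetical input)
    Waves only ever move later and never beyond the latest planned wave,
    so the loop terminates, even for circular dependencies.
    """
    waves = dict(planned)
    changed = True
    while changed:
        changed = False
        for provider, deps in depends_on.items():
            if provider not in waves:
                continue
            required = max((waves[d] for d in deps if d in waves), default=waves[provider])
            if required > waves[provider]:
                waves[provider] = required
                changed = True
    return waves

# Hypothetical providers: CP_SALES needs CP_MARGIN, which needs CP_COSTS (planned for wave 2)
planned = {"CP_SALES": 1, "CP_MARGIN": 1, "CP_COSTS": 2}
depends_on = {"CP_MARGIN": {"CP_COSTS"}, "CP_SALES": {"CP_MARGIN"}}
print(readjust_waves(planned, depends_on))
# {'CP_SALES': 2, 'CP_MARGIN': 2, 'CP_COSTS': 2}
```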

Our task was to use the BW conversion tool for a shell conversion that migrates the data flows of each composite provider completely and smoothly. Although the conversion tool can determine dependencies itself using a scope analysis, it proved useful to spread the objects manually across several conversion tasks. The automatic scope analysis often returns very extensive object lists, which increases the risk of aborted runs when dependencies are complex. Furthermore, certain dependencies, such as lookups in ABAP transformations, are not recognized automatically.

Our solution was to manually define the scope for each conversion step to achieve better manageability and reduce migration complexity. Based on this approach, we developed the following prioritization for the migration:

- InfoProviders (InfoObjects, aDSOs, Composite Providers)

- Transfer of native DDIC objects via abapGit into the BW Bridge ABAP stack (for example, Z tables accessed by lookups in transformations, or ABAP classes into which routine code had been moved)

- Transformations

- DTPs and process chains
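One way to turn such a prioritization into manageable conversion tasks is sketched below in Python. The type codes (IOBJ, ADSO, HCPR, TRFN, DTPA, RSPC) are BW TLOGO object types used here as illustrative labels, and the task size limit is an arbitrary assumption rather than a limit of the conversion tool. DDIC objects are left out because they were transferred separately via abapGit, and objects of any other type are simply ignored by this sketch.

```python
from itertools import islice

# Phases in the order described above; within a phase, types are handled in list order.
# The TLOGO-style type codes are illustrative labels; DDIC objects go via abapGit instead.
PHASES = [
    ("infoproviders", ["IOBJ", "ADSO", "HCPR"]),
    ("transformations", ["TRFN"]),
    ("dtps_and_chains", ["DTPA", "RSPC"]),
]

def plan_conversion_tasks(objects, max_task_size=25):
    """Split (type, name) pairs into ordered conversion tasks of at most max_task_size objects."""
    tasks = []
    for phase, types in PHASES:
        ranked = sorted((types.index(t), name) for t, name in objects if t in types)
        names = iter(name for _, name in ranked)
        while chunk := list(islice(names, max_task_size)):
            tasks.append((phase, chunk))
    return tasks

# Hypothetical objects of one composite provider's data flow
objects = [("TRFN", "TRFN_SALES"), ("ADSO", "ADSO_SALES"), ("HCPR", "CP_SALES"),
           ("IOBJ", "ZCUSTOMER"), ("DTPA", "DTP_SALES"), ("RSPC", "PC_SALES")]
for phase, chunk in plan_conversion_tasks(objects, max_task_size=2):
    print(phase, chunk)
```

Keeping the task size small trades more conversion runs for a lower risk that one complex dependency aborts a large task.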

The DDIC objects had to be manually adapted and activated in the BW Bridge’s ABAP Cloud environment on SAP BTP. This environment is more restrictive than classic ABAP and only allows APIs released by SAP, so most existing ABAP developments required significant refactoring.

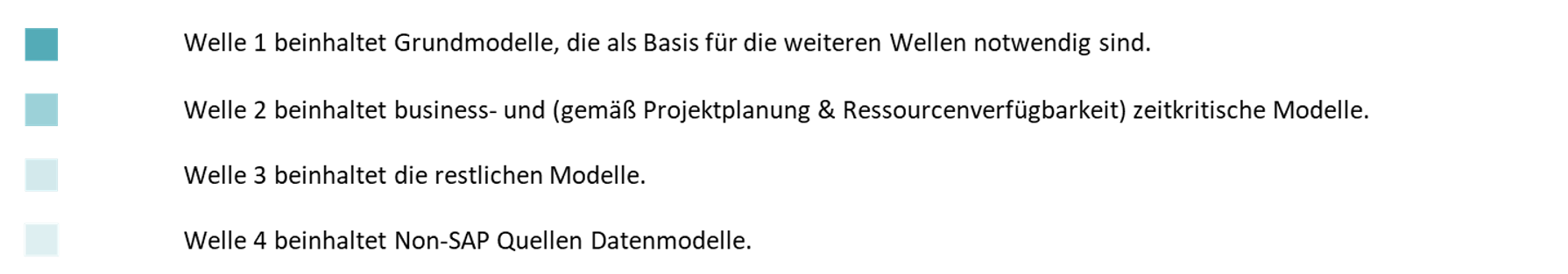

To create the scope lists, we needed a complete listing of all objects in the data flow, including transformations and DTPs, for each composite provider. We used the data flow analysis in the Performer Suite for this. This enabled us to assign all objects in the data flow to a scenario (in our case, a migration wave).

We could then filter the entity list in the Performer Suite by the corresponding scenario and output all of its objects as a list.

These lists served as work lists for the migration and were enriched with additional information (e.g. whether a transformation uses ABAP code) and the migration status. This method allowed us to manage the objects to be migrated in each wave in a transparent and structured way.
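The data flow listing and the resulting work list can be sketched as a graph walk in Python. The `upstream` mapping (object to the objects that load into it), the `uses_abap` flag set and the status values are hypothetical; in the project, this information came from the Performer Suite’s data flow analysis and was maintained in Excel.

```python
from collections import deque

def collect_data_flow(start, upstream):
    """All objects that feed `start`, directly or indirectly (breadth-first search)."""
    seen, queue = {start}, deque([start])
    while queue:
        for source in upstream.get(queue.popleft(), ()):
            if source not in seen:
                seen.add(source)
                queue.append(source)
    return seen

def build_worklist(wave, providers, upstream, uses_abap):
    """One work list row per object in the data flows of the given composite providers."""
    worklist = {}
    for provider in providers:
        for obj in sorted(collect_data_flow(provider, upstream)):
            # Objects shared by several providers are listed only once
            worklist.setdefault(obj, {"wave": wave, "uses_abap": obj in uses_abap, "status": "open"})
    return worklist

upstream = {  # hypothetical: object -> objects that load into it
    "CP_SALES": ["ADSO_SALES"],
    "ADSO_SALES": ["TRFN_SALES", "DTP_SALES"],
    "TRFN_SALES": ["DS_SALES"],
}
worklist = build_worklist(1, ["CP_SALES"], upstream, uses_abap={"TRFN_SALES"})
for obj, row in worklist.items():
    print(obj, row)
```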

Further Possible Uses in the Project

The Performer Suite proved to be a powerful tool in our migration project. Particularly valuable was how easily and quickly it let us create lists of BW objects for various purposes.

- For ad hoc analysis, the suite (specifically, System Scout) provided quick insights into data flows that proved to be more detailed and user-friendly than the data flow view in the BW modeling tools.

- In cases where models were archived rather than migrated, we used the suite to quickly create complete model documentation.

- For development testing, the suite was particularly useful for quickly determining which InfoProviders were loaded via which process chains. This quick overview helped us to manage the development test process more efficiently.

Challenges and Lessons Learned During the Project

Despite the tool support, we naturally also encountered challenges and limitations during the project. Most were restrictions on SAP’s side, but there were also features that the Performer Suite does not (yet) offer.

Exporting the query SetCards for 3.x query versions proved problematic, so manual checks were necessary. For larger query selections, occasional crashes occurred, so we had to spread the queries belonging to a composite provider across several export jobs. Consolidating the individually documented queries onto a single sheet required additional effort, which we handled by developing a macro. A more targeted analysis of all global and local key figures of a composite provider, for example as a System Scout analysis, would be helpful in the future.

The main roadblock for our master-query approach was the extensive set of restrictions on entity import in Datasphere, which are listed in detail in an SAP Note (https://me.sap.com/notes/2932647). The import process was not transparent: it was not possible to see which problems occurred and which required manual corrections. Some features prevented the import completely, while others were skipped, resulting in incomplete key figure definitions. The restrictions on supported formula operators were especially critical. The limitation to the basic operations (+, -, *, /) makes entity import for queries almost unusable in practice, because real queries are often far more complex than these simple operations. In view of these problems, creating the key figures manually in Datasphere ultimately proved more efficient. We limited the entity import to the composite providers, from which we then created the analytic models, and created the key figures manually in those analytic models. As a basis, we used the query SetCard lists we had created for the master queries.
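To see in advance which key figure formulas are likely to run into the operator restriction, a rough pre-check can flag anything beyond the four basic operators. The Python sketch below is a heuristic, not SAP’s actual validation logic: it assumes operands are plain identifiers, treats any identifier followed by an opening parenthesis as a function call (for example NODIM), and reports every other symbol outside `+ - * / ( )`.

```python
import re

# Numbers, identifiers, the power operator ** or any other single non-space character
TOKEN = re.compile(r"\d+(?:\.\d+)?|[A-Za-z_]\w*|\*\*|\S")
ALLOWED_SYMBOLS = {"+", "-", "*", "/", "(", ")"}

def unsupported_parts(formula):
    """Tokens that go beyond basic arithmetic on key figures (heuristic)."""
    tokens = TOKEN.findall(formula)
    problems = []
    for i, tok in enumerate(tokens):
        if tok[0].isalpha() or tok[0] == "_":
            # Identifier: fine as an operand, flagged if used as a function name
            if i + 1 < len(tokens) and tokens[i + 1] == "(":
                problems.append(tok)
        elif tok[0].isdigit() or tok in ALLOWED_SYMBOLS:
            continue
        else:
            problems.append(tok)  # e.g. **, %, <, =
    return problems

for f in ["(Revenue - Cost) / Revenue * 100", "NODIM(Revenue) % Cost", "Revenue ** 2"]:
    print(f, "->", unsupported_parts(f) or "basic operators only")
```

Key figures flagged this way are candidates for manual creation in the analytic model from the start.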

When creating the object lists for the migration waves, we encountered another challenge in the Performer Suite: the data flow analysis did not allow DTPs to be assigned directly to scenarios. We had to iteratively check the InfoProviders of the scenario in the entity view, using the parent column to determine which DTPs belong to which InfoProvider, and then assign those DTPs to the scenario. A simpler way to capture all components of a data flow holistically, including DTPs and without workarounds, would have significantly simplified and sped up the process.
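If the DTPs can be exported together with their source and target, the iterative check collapses into a single pass. The Python sketch assumes each DTP is available as a hypothetical `(name, source, target)` tuple and that the target corresponds to the parent InfoProvider shown in the entity view; all object names are invented.

```python
def assign_dtps(scenario_providers, dtps):
    """Return the DTPs whose target is one of the scenario's InfoProviders.

    dtps: iterable of hypothetical (dtp_name, source, target) tuples from an export
    """
    providers = set(scenario_providers)
    return sorted(name for name, _source, target in dtps if target in providers)

dtps = [
    ("DTP_DS_TO_ADSO_SALES", "DS_SALES", "ADSO_SALES"),
    ("DTP_ADSO_SALES_TO_ADSO_REPORT", "ADSO_SALES", "ADSO_REPORT"),
    ("DTP_DS_TO_ADSO_COSTS", "DS_COSTS", "ADSO_COSTS"),
]
print(assign_dtps({"ADSO_SALES", "ADSO_REPORT"}, dtps))
# ['DTP_ADSO_SALES_TO_ADSO_REPORT', 'DTP_DS_TO_ADSO_SALES']
```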

Summary and Outlook

In summary, using the Performer Suite in our project provided enormous value: it reduced manual effort and potential sources of error and sped up the entire migration process. The advantage of the project license was that we only had to purchase a temporary license for the specific use case. Our intention was not to integrate the Performer Suite into day-to-day IT operations but to use it specifically for our purpose, so expert knowledge of every facet of the tool was not required.

We are aware that we could possibly have gained even more helpful information from the tool or continued working directly with the lists in the tool. Often, we fell back on the traditional method that we frequently see at customers and normally warn against: exporting to Excel, followed by creative further processing of the exported data. But sometimes you just have to be pragmatic! If it was easier for the project participants, not all of whom had access to the tool, to work with object lists in the familiar Excel environment, that was absolutely fine with us.

In addition to the features already mentioned with regard to key figure and data flow analysis, we still see potential for future enhancements that could further increase the benefits of the Performer Suite in such projects:

- More ABAP-based information in the data flow analysis, especially in terms of ABAP Cloud language syntax. For example, it would be very helpful if the data flow analysis could show which transformations use ABAP coding that is not ABAP Cloud-compatible.

- It is great to see that bluetelligence is investing heavily in expanding its analyses toward SAP Datasphere and SAP Analytics Cloud. In addition to views, it will in future also be possible to analyze analytic models and task chains via the Performer Suite, which will help in migration projects when comparing the old BW world with the new Datasphere world. Unfortunately, our version of the tool could only connect to the old BW system, not to Datasphere or BW Bridge.

- Of course, it would be ideal if the Migration Booster, which is used as migration support for BW to BW/4HANA, could also be used for BW Bridge projects in the future. Compared to the SAP Conversion Tool, it enables a much more convenient and targeted migration and also allows new naming conventions to be assigned. It remains to be seen whether SAP will provide the necessary APIs on BTP and whether the market for BW Bridge migrations is large enough to justify such an investment. But maybe it’s not too early to start making wishes – Christmas is coming soon 🙂

Finally, bluetelligence would like to point out that further information on System Scout, the tool used for this migration, is available from bluetelligence.

This article examines challenges in ABAP programming, the central programming language in the SAP environment, and offers solutions. The first part explains the most important aspects of ABAP, including its role in controlling and extending business processes in organizations and the variety of ABAP objects. Next, it describes typical challenges in dealing with ABAP code in large companies. Finally, it highlights the opportunities that automation add-on tools offer in ABAP development. A use case shows how these tools help to analyze and document code structures and dependencies more quickly and precisely.

1. Understanding ABAP Coding: Definition, Classification and Associated Objects

ABAP (Advanced Business Application Programming) is a programming language developed by SAP that is mainly used for the development of applications in the SAP environment. With SAP being the world’s leading company for business software, ABAP is one of the main languages when it comes to controlling and expanding business processes within applications.

Although ABAP was originally procedural, today it also supports object-oriented programming, similar to Java or C++. This makes it easier to implement modern software development principles.

In a large company, the number of ABAP objects (programs, classes, function modules, reports, modules, etc.) can run into tens of thousands. This includes:

- Reports: Programs that are used for retrieving, processing and displaying data

- Function Modules: Reusable modules in ABAP that encapsulate specific tasks and are called in programs

- Forms: User-defined forms such as invoices or delivery notes

- Enhancements: User exits, BAdIs (Business Add-Ins) and other mechanisms for customizing the SAP standard

2. Mastering Challenges in ABAP Coding

Development teams in large companies often struggle with non-transparent dependencies due to the abundance of ABAP coding. This results in errors, increased data model complexity, difficult maintainability and risks during system updates. Below, we’ll explain how these dependencies come about in the first place:

a) Avoid Missing or Insufficient Documentation

One of the most common causes of non-transparent dependencies is inadequate or non-existent documentation. In many projects, the focus is placed on developing functionality, while documentation is neglected. However, it is essential to record for “posterity” how different programs, modules and data structures are linked together. Without clearly defined requirements, assigned responsibilities and continuous updating of documentation, dependencies become difficult to recognize, because reverse engineering across multiple system types is extremely time-consuming.

b) Managing Historically Evolved Code

SAP systems and their ABAP code often remain in use for many years and are continuously adapted and extended. Over time, more and more user-defined functions, quick fixes, workarounds and enhancements are created that were originally intended to meet short-term requirements. Old modules and programs continue to be used even though newer solutions exist, and changes are sometimes made without taking the overall system into account. As a result, these “evolved” structures are no longer clearly traceable, especially if different internal and external developers or teams have worked on the same programs over the years.

c) Consider Re-use and the Lack of Modularity

In ABAP development, global data and functions are often used that are integrated in many different programs. If developers use global classes, database tables or function modules without defining a clean modularization and clear interfaces, close dependencies arise between different parts of the system. Copying code instead of using common modules also leads to this. These dependencies are often difficult to recognize and are not always documented.

d) Avoid Unplanned and Uncoordinated Adjustments

In larger SAP installations, several developers or teams often work on different data structures and functions at the same time. If these adjustments are made without clear coordination or communication, without versioning, or without code review processes, dependencies can arise that those involved are not aware of. These dependencies then remain opaque until an error or problem brings them to light.

e) Correctly Regulate the Use of Dynamic and Indirect Calls

In ABAP, it is possible to use dynamic program calls and indirect accesses to implement generic solutions (e.g. dynamic function calls or SELECTs to tables whose names are only determined at runtime). Metadata or tables are also sometimes used at runtime to control program sequences. Such techniques can be useful for developing flexible solutions, but they make the code less comprehensible. Without clear references to the dependent modules, it becomes more difficult to understand which programs or data structures are actually being used.
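Dynamic calls can at least be located, so that a reviewer knows where static dependency analysis stops. The Python sketch below uses regular expressions to flag a few common dynamic forms: a function module name held in a variable, a table name in parentheses, `SUBMIT (report)`, and `->(method)` or `=>(method)`. It is a heuristic under simplifying assumptions: it only looks at single lines, strips inline comments naively at the first `"`, and does not cover every dynamic ABAP syntax variant.

```python
import re

DYNAMIC_PATTERNS = {
    # CALL FUNCTION followed by a variable instead of a quoted literal
    "dynamic function call": re.compile(r"\bCALL\s+FUNCTION\s+(?!['`])\w+", re.IGNORECASE),
    # Database table name supplied in parentheses at runtime
    "dynamic table access": re.compile(
        r"\b(?:FROM|UPDATE|MODIFY|INSERT(?:\s+INTO)?)\s+\(\s*\w+\s*\)", re.IGNORECASE),
    # Report name supplied at runtime
    "dynamic report call": re.compile(r"\bSUBMIT\s+\(\s*\w+\s*\)", re.IGNORECASE),
    # Method name supplied at runtime: obj->(name) or class=>(name)
    "dynamic method call": re.compile(r"(?:->|=>)\(\s*\w+\s*\)"),
}

def find_dynamic_calls(source):
    """Return (line number, kind, statement) for lines that look like dynamic calls."""
    hits = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        if line.startswith("*"):
            continue  # full-line comment
        code = line.split('"', 1)[0]  # naive: drop an inline comment
        for kind, pattern in DYNAMIC_PATTERNS.items():
            if pattern.search(code):
                hits.append((lineno, kind, line.strip()))
    return hits

# Hypothetical ABAP snippet
abap = "\n".join([
    "REPORT z_demo.",
    "* CALL FUNCTION lv_commented_out.",
    "CALL FUNCTION 'Z_STATIC_FM'.",
    "CALL FUNCTION lv_fm_name.",
    "SELECT * FROM (lv_table) INTO TABLE @lt_data.",
    "SUBMIT (lv_report) AND RETURN.",
])
for hit in find_dynamic_calls(abap):
    print(hit)
```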

f) Mind the Close Connection Between User-defined and Standard SAP Components

“User exits”, “enhancements” or “modifications” to extend the SAP standard are commonplace. These user-defined developments (Z programs, enhancements) are often strongly linked to the SAP standard. When changes are made to the standard (e.g. through an SAP upgrade or a support package), unforeseen dependencies can arise because the programs are closely interlinked in a way that is not transparent. The close links between user-defined enhancements and the SAP standard can be difficult to understand.

g) Do Not Neglect Tests and Quality Controls

If the code is not sufficiently tested or checked, dependencies can be overlooked. Tests, especially unit and integration tests, often uncover hidden dependencies that surface when a module or program changes. If such tests are missing or inadequate, these dependencies remain undetected for a long time and changes go into production with errors. At its root, this problem stems from a lack of quality assurance processes.

3. Tool Support: Automated Transparency in ABAP Coding

As described above, the challenges with ABAP coding are many and varied and involve a lot of effort in terms of documentation, coordination and quality assurance. SAP add-on tools can automate these processes: they document the code, make it quick to search and adapt, facilitate testing and offer collaboration functions for both internal and external stakeholders.

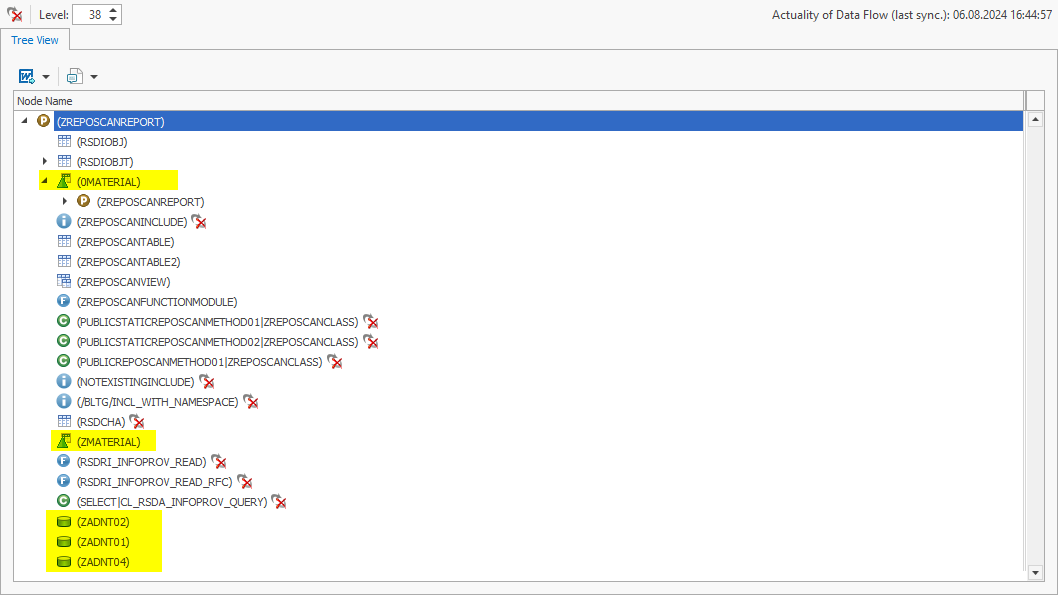

A specific use case will help you to visualize the use of such tools in everyday working life:

You are an ABAP developer. For some time now, you have been wondering why the record type for company code 2000 in table ACDOCA is always set to Planned. Unfortunately, you no longer have access to the original developers who implemented these processes because they have long since left the company. You are therefore faced with the challenge of determining the origin of the data in this table without any tips or documentation.

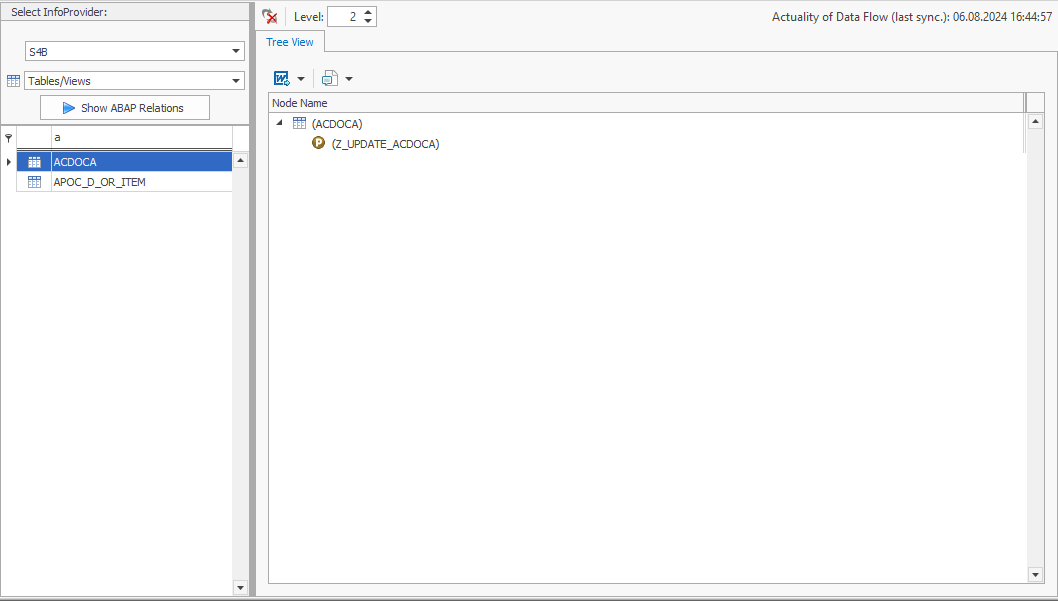

Instead of laboriously combing through the SAP BW backend and manually searching for the relevant ABAP objects, use an SAP add-on tool that can automatically search through metadata and put it into context. One such tool is our “System Scout” software, for example. Using its “ABAP Relations” function, you can analyze relationships between various ABAP objects and the ACDOCA table at the touch of a button. This is how you discover that the Z_UPDATE_ACDOCA program manipulates the ACDOCA table.

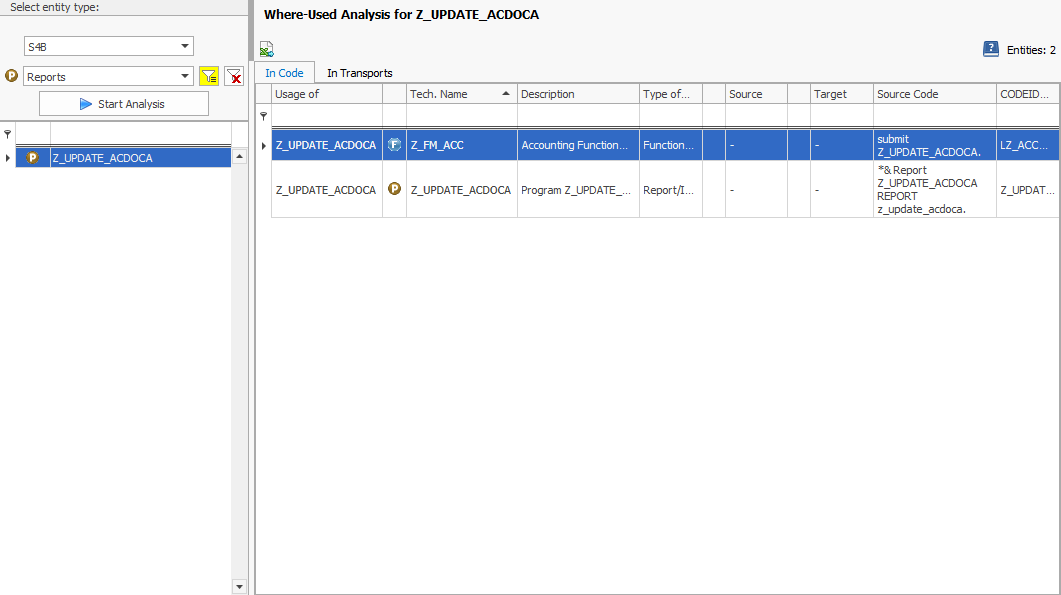

In this context, “manipulating” a table means write access via the statements INSERT, MODIFY, UPDATE and DELETE. But you need even more information: you want to know which ABAP objects trigger the data manipulation. Here, too, the tool offers a helpful function, the where-used list. Running it for the program Z_UPDATE_ACDOCA shows that the program is referenced in the function module Z_FM_ACC.

Knowing where to look for the logic that sets the record type to Planned will save you valuable time when your to-do list is full and there is no documentation.
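The two lookups in this use case, finding the objects that write to a table and then walking the where-used list upwards, can be approximated in Python. This is not how System Scout implements “ABAP Relations”: the sketch assumes the source code and the references of each object are already available as plain dictionaries, matches write statements with a simple regular expression (no comment handling, and internal tables with the same name would be matched too), and uses a hypothetical batch job `Z_BATCH_JOB` in the example.

```python
import re

def write_accesses(sources, table):
    """Map object name -> set of write statements (INSERT/MODIFY/UPDATE/DELETE) on `table`."""
    pattern = re.compile(
        rf"\b(INSERT|MODIFY|UPDATE|DELETE)\s+(?:INTO\s+|FROM\s+)?{re.escape(table)}\b",
        re.IGNORECASE,
    )
    hits = {}
    for obj, source in sources.items():
        ops = {m.group(1).upper() for m in pattern.finditer(source)}
        if ops:
            hits[obj] = ops
    return hits

def where_used(references):
    """Invert 'object -> objects it references' into 'object -> objects referencing it'."""
    index = {}
    for caller, callees in references.items():
        for callee in callees:
            index.setdefault(callee, set()).add(caller)
    return index

def all_triggers(obj, index):
    """Every object that references `obj` directly or via intermediate objects."""
    found, stack = set(), [obj]
    while stack:
        for caller in index.get(stack.pop(), ()):
            if caller not in found:
                found.add(caller)
                stack.append(caller)
    return found

# Hypothetical source snippets and references
sources = {
    "Z_UPDATE_ACDOCA": "UPDATE acdoca SET ... WHERE rbukrs = '2000'.",
    "Z_REPORT_READ": "SELECT * FROM acdoca INTO TABLE @DATA(lt_items).",
}
references = {"Z_FM_ACC": {"Z_UPDATE_ACDOCA"}, "Z_BATCH_JOB": {"Z_FM_ACC"}}

writers = write_accesses(sources, "ACDOCA")  # {'Z_UPDATE_ACDOCA': {'UPDATE'}}
index = where_used(references)
print(writers, all_triggers("Z_UPDATE_ACDOCA", index))
```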

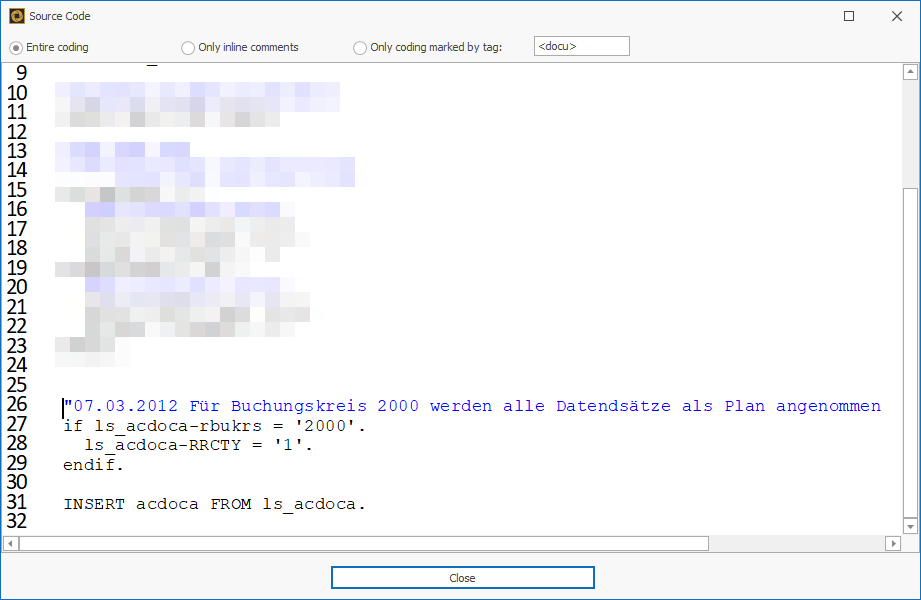

After the automated analysis of the source code, you also find out that a very old piece of logic from 2012 always sets the record type for company code 2000 to Planned.

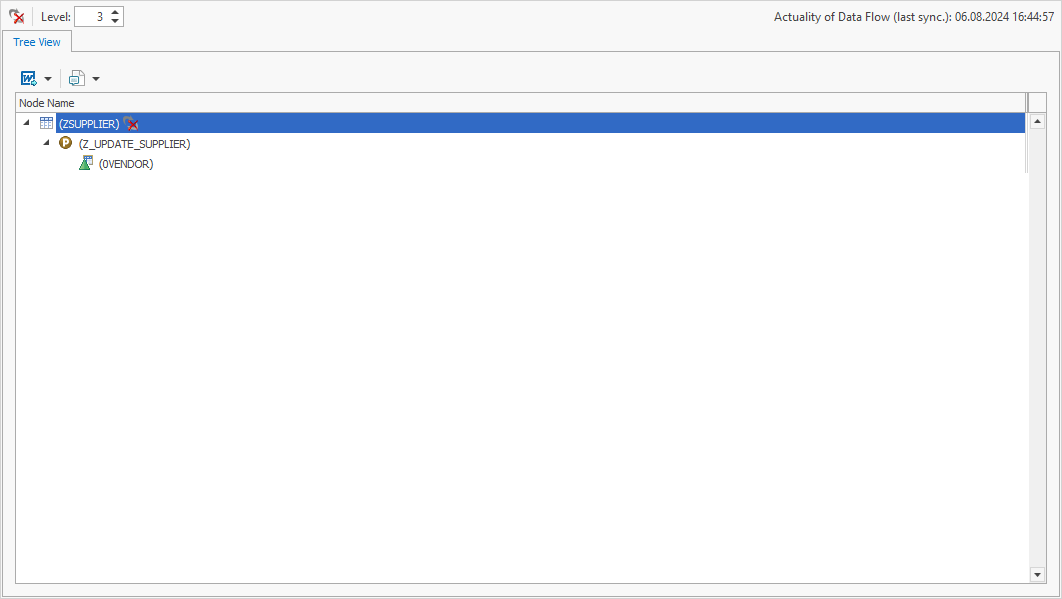

Incidentally, beyond the use case described for the classic ABAP environment, the “ABAP Relations” function also supports you in the BW environment. If a lookup scan is carried out in a BW data flow, the identified objects can then also be analyzed with “ABAP Relations”.

For example, you can see that the table ZSUPPLIER is manipulated by a program – namely Z_UPDATE_SUPPLIER.

In addition, identified tables of BW objects are displayed directly as BW objects. This ensures a better understanding and enables further interactions and analyses.

The functions of the System Scout tool, in particular “ABAP Relations” and the where-used list, offer considerable advantages to ABAP developers who need to quickly understand complex data flows:

- They provide a quick overview of data manipulations and their origin, save time and increase the accuracy of analyses.

- By clearly displaying the relationships between different ABAP objects and tables, they create transparency and make work considerably easier.

- In addition, support for the BW environment makes the entire data flow analysis in SAP systems even more efficient and easier to understand.

P.S.: Nobody stays in their job forever – so don’t forget to document ABAP objects and their relationships for posterity. There is also an SAP add-on tool that automates this – the Docu Performer. It ensures that future ABAP developers no longer have to research, but can directly access detailed and up-to-date documentation.

Collaboration is a crucial driver of success, especially in complex domains like a corporation’s Business Intelligence (BI). Collaboration lets employees pool their knowledge and skills and work together more efficiently. That way, the BI team can respond to changes more quickly, act more flexibly, and ultimately have a positive impact on their corporation’s results and competitiveness.

Now, what requires collaboration in a BI work context, exactly? The everyday tasks and decisions of any BI employee involve charts and reports. Most BI departments use high-end software solutions to make them accessible and offer a deeper insight. Yet, many face low use and acceptance among their teams. Collaborative BI boosts the acceptance of BI software and simultaneously changes how we handle data analysis and decision-making – by promoting teamwork and combining everyone’s knowledge. Here’s how:

1. Understanding Collaborative BI

In order to get a deeper understanding of Collaborative BI, let’s have a look at the ‘old’ way, before this trend: Traditional Business Intelligence follows a centralized, IT-driven model where a specialized team of analysts produces static, historical reports for decision-makers, often leading to extended turnaround times for fresh insights.

Now, on the other hand, Collaborative BI enables a wider array of users throughout the organization to interact with dynamic, real-time data using self-service tools that diminish reliance on IT. This method and its corresponding tools promote improved collaboration through functionalities such as report sharing, commenting, and annotating, while emphasizing both real-time and predictive analytics to facilitate proactive decision-making.

Traditional BI versus Collaborative BI

Key Objectives of Implementing Collaborative BI

The primary aim of Collaborative BI is to enhance problem-solving and decision-making processes. The following aspects are fundamental to achieving this overarching goal:

Decentralized Analysis

By engaging and empowering a diverse range of users with various roles, backgrounds, and skill sets, organizations can tap into a multitude of perspectives and collective intelligence. This approach helps in mitigating bottlenecks that are traditionally linked to centralized teams, thereby expediting the process of problem-solving. Engaging users from diverse departments and backgrounds fosters a rich array of viewpoints and insights, ultimately resulting in more thorough and inventive solutions.

Improved Dashboard & Report Design

Users with diverse roles, backgrounds, and skill levels require customized dashboards and reports that align with their specific needs. By fostering the sharing of ideas and knowledge among these users, organizations can create tailored dashboards and reports that effectively meet the varied requirements of their audience. Moreover, real-time access to data enables users to quickly identify and address issues as they arise. Interactive dashboards and reports allow users to drill down into data, uncovering root causes and patterns more quickly than with static reports.

Collaboration Tools & Services

Collaborative BI tools provide features such as commenting, sharing, and discussion threads that facilitate immediate communication and collaboration among team members, allowing for faster consensus and action. Seamless real-time data sharing across the organization ensures that all relevant stakeholders have access to the same information, fostering a unified approach to decision-making. Self-service BI tools enable users to generate their own reports and queries without waiting for IT support, accelerating the decision-making process.

2. Challenges in Implementing Collaborative BI

The implementation of Collaborative BI presents a unique set of challenges, which can differ based on the organization’s initial position and current circumstances. Overcoming these challenges will ensure the success of your Collaborative BI implementation.

- Tool Elasticity

- Data Privacy, Security and Data Ownership

- Metadata

- Data Integration

- Communication between Employees

Tool Elasticity

Tool elasticity, meaning the ability of BI tools to scale and adapt to varying user needs and workloads, poses a challenge for implementing collaborative BI as well: Ensuring scalability without performance degradation, integrating with existing systems, managing variable costs, and facilitating user adoption across all skill levels require significant effort. Additionally, data security concerns, especially with cloud-based solutions, performance optimization, maintaining consistent and reliable access, and balancing customization with stability complicate the process. These factors make it difficult for organizations to effectively implement and maintain elastic BI tools for collaborative efforts.

Data Privacy, Security & Data Ownership

Data privacy, security, and data ownership naturally pose challenges when implementing collaborative BI: handling sensitive information, managing authorized usage, ensuring compliance with regulations like GDPR and HIPAA, and managing the increased risk of data breaches are complex and critical tasks. Additionally, implementing robust security measures and a secure infrastructure requires significant investment and expertise. Continuous user training and awareness programs are essential to minimize human errors that could compromise data security, further complicating the implementation of collaborative BI.

Metadata

Metadata is extremely helpful in the context of collaborative BI because it answers questions about data origin and usage. In traditional BI, business departments ask these questions and IT answers them. In collaborative BI, business users find the answers themselves. This, however, presents the challenge of ensuring that data is correctly understood and used across the organization, including by less tech-savvy users (e.g. through comprehensive training). Additionally, metadata only supports correct analyses if it is kept up to date, which involves significant effort and constant documentation of data sources, definitions, structures, and usage. Discrepancies in metadata can lead to misinterpretations and inconsistencies, complicating data sharing and collaboration.

Data Integration

Data integration is particularly challenging and crucial for Collaborative BI: It involves consolidating different data sources with varying formats, structures, and quality levels into a unified system that all users can access and analyze. It is essential for enabling real-time, collaborative decision-making, but it requires sophisticated tools and processes for data extraction, transformation, and loading (ETL). Effective data integration also necessitates collaboration between IT and business units to align on data definitions and standards, a challenging but essential task to ensure that all users are working with the same accurate and consistent data.
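As an illustration of the transformation step in ETL, the Python sketch below maps records from two sources with different field names and date formats into one unified structure. The source names, mappings and target fields are hypothetical; the SAP-style field names BUKRS, BUDAT and HSL stand for company code, posting date and amount.

```python
from datetime import datetime
from decimal import Decimal

# Hypothetical per-source mappings: source field -> unified field, plus the source's date format
SOURCE_MAPPINGS = {
    "sap": {
        "fields": {"BUKRS": "company_code", "BUDAT": "posting_date", "HSL": "amount"},
        "date_format": "%Y%m%d",    # e.g. 20250131
    },
    "crm": {
        "fields": {"company": "company_code", "booked_on": "posting_date", "value": "amount"},
        "date_format": "%d.%m.%Y",  # e.g. 31.01.2025
    },
}

def to_unified(record: dict, source: str) -> dict:
    """Rename fields, parse dates and normalize amounts into one shared structure."""
    mapping = SOURCE_MAPPINGS[source]
    row = {target: record[src] for src, target in mapping["fields"].items()}
    row["posting_date"] = datetime.strptime(row["posting_date"], mapping["date_format"]).date()
    row["amount"] = Decimal(str(row["amount"]))  # Decimal avoids float rounding for money
    row["source"] = source                       # keep the origin for lineage
    return row

a = to_unified({"BUKRS": "2000", "BUDAT": "20250131", "HSL": "100.50"}, "sap")
b = to_unified({"company": "2000", "booked_on": "31.01.2025", "value": "100.5"}, "crm")
print(a)
print(b)
```

Agreeing on such mappings is exactly the kind of alignment on data definitions between IT and business units that the paragraph above describes.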

Communication between Employees

Communication between employees is the heart of Collaborative BI, and it is a challenge in itself: due to varying levels of technical and business expertise and understanding of data, as well as differences in language, priorities, and perspectives, misunderstandings are bound to occur. They can lead to incorrect data interpretations, flawed analyses, and poor decision-making. Additionally, coordinating across departments and ensuring that everyone is aligned on BI objectives, processes, and tools requires continuous effort. Establishing suitable communication channels and fostering a culture of open communication requires ongoing commitment from leadership to break down silos and encourage active participation from all employees.

3. Recommendations for Improving Collaboration in Your Existing BI Landscape

Collaborative BI has its challenges, but with the following recommendations you can overcome them, and its striking benefits will outweigh them:

- Self-Service & Data Visualization

- Data Quality & Data Governance

- Metadata Management & Data Cataloging

- Culture & Communication

Self-Service & Data Visualization

Self-service and data visualization are key to Collaborative BI: both take weight off the IT department’s shoulders and make data accessible and understandable to all departments. They take the form of

- intuitive, user-friendly tools that empower employees of all skill levels to access, analyze, and visualize data independently, fostering a data-driven culture across the organization

- comprehensive training to ensure that these tools are used, and used efficiently

- customizable data visualization that allows users to tailor dashboards to their specific needs and easily share findings with colleagues

- robust data governance and real-time data access, which further enhance the reliability and relevance of the insights generated

Encouraging feedback and continuous improvement of these tools based on user experience helps to keep them aligned with the evolving needs of the organization.

Data Quality & Data Governance

A second big necessity in the process of introducing Collaborative BI is improving data quality. It can be achieved by implementing strong data governance practices:

Establishing

- standardized data entry protocols,

- regular data cleaning

- validation processes

- and clear data ownership that ensures accountability among all stakeholders

is essential to maintain high-quality data.

Advanced data management tools can even automate error detection and correction, significantly reducing inconsistencies. Beyond tooling, the culture of transparency that comes with Collaborative BI fosters open communication about data issues and collective efforts to resolve them, even where this has to be done manually.

Metadata Management & Data Cataloging

Metadata management and data cataloging are another essential aspect of facilitating Collaborative BI. Ideally, you can even combine the two: via APIs or dedicated metadata repositories, it is possible to include SAP or Power BI metadata in or alongside your Data Catalog.

When implementing a data catalog, make sure that it

- serves as a central access point for every BI employee (single point of truth)

- provides an intuitive interface and displays data in a straightforward way, so that users with varying backgrounds can comprehend the information

- includes metadata such as the usage, source and lineage of data in order to efficiently answer questions that arise in the context of reporting

- displays up-to-date metadata in order to make the single point of truth really true, and thus boost usage of the Data Catalog
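The requirements above can be expressed as a small data structure. The Python sketch below models a hypothetical catalog entry carrying usage, source and lineage metadata, plus a check that lists entries whose metadata has not been synchronized recently. The field names, example entries and the one-day threshold are assumptions that each organization would define for itself; this is not the data model of any particular product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta

@dataclass
class CatalogEntry:
    """Hypothetical catalog entry combining business and technical metadata."""
    name: str
    definition: str                                   # business definition
    source: str                                       # technical origin, e.g. system and object
    lineage: list[str] = field(default_factory=list)  # upstream objects, nearest first
    used_in: list[str] = field(default_factory=list)  # reports or dashboards using the entry
    last_synced: datetime = field(default_factory=datetime.now)

def stale_entries(entries, now, max_age=timedelta(days=1)):
    """Names of entries whose metadata is older than max_age and may no longer be true."""
    return sorted(e.name for e in entries if now - e.last_synced > max_age)

now = datetime(2025, 1, 10)
entries = [
    CatalogEntry("Revenue", "Net sales after discounts", "BW: CP_SALES",
                 lineage=["ADSO_SALES"], last_synced=datetime(2025, 1, 9, 12)),
    CatalogEntry("Margin", "Revenue minus cost of goods sold", "BW: CP_SALES",
                 last_synced=datetime(2025, 1, 5)),
]
print(stale_entries(entries, now))  # ['Margin']
```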

Continuous training and support for employees on the importance and use of metadata further enhance their ability to contribute to and benefit from the collaborative BI efforts, ultimately leading to more informed and effective decision-making.

Culture & Communication

Last, but not least, people make a company. In order to foster the new collaborative culture, management should:

- prioritize transparency and actively encourage the sharing of information and insights across all levels of the organization

- implement regular training sessions and workshops to enhance data literacy, ensuring all employees feel confident in their ability to contribute to BI initiatives

- recognize and reward teamwork and collective problem-solving

- create dedicated physical collaboration spaces, which streamline communication and data sharing and make it easier for teams to work together effectively

4. Collaborative BI Tools: Data Catalog meets Metadata Repository

As described above, user-friendly Data Catalogs and Metadata Repositories are two crucial tools for enhancing Collaborative BI in your company. As a BI software company with 16 years of experience, bluetelligence has developed a combination of both: our Data Catalog “Enterprise Glossary” includes business information as well as automatically synchronized metadata from all connected SAP and Power BI systems. It ticks all the boxes for driving Collaborative BI by

- providing a central access to all key figures and reports in the company

- including information for all knowledge levels: business definitions as well as technical metadata (data source, data lineage, related key figures, etc) in an understandable way

- offering a user-friendly search and intuitive interface

- automatically syncing all connected SAP & Power BI systems for up-to-date information

- providing communication features for remarks and discussions

- being able to use standard templates or customize it to your needs entirely

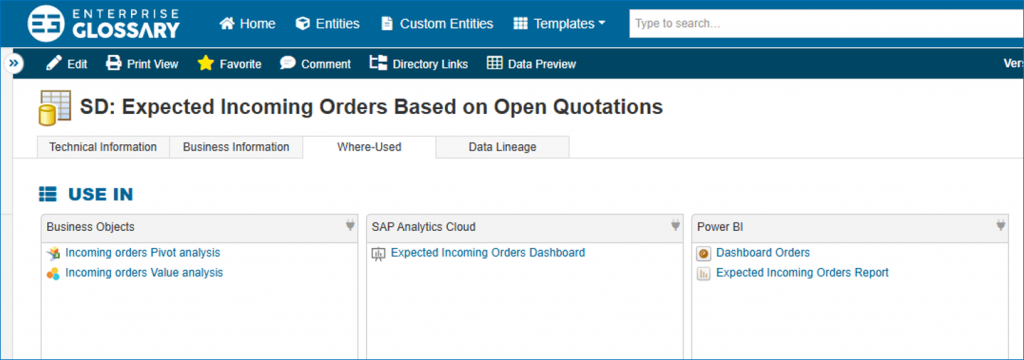

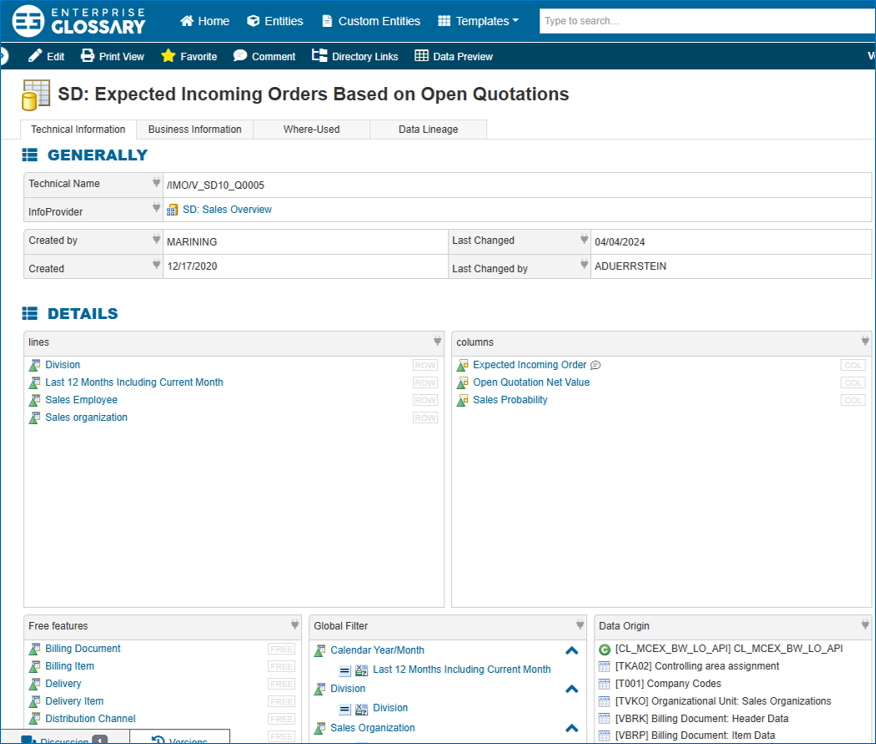

Glossary Entry in the Data Catalog

Data Lineage in the Data Catalog

Overall, bluetelligence empowers your company to leverage metadata more effectively, driving innovation and improving business outcomes through enhanced collaboration. Read more about our data catalog, the Enterprise Glossary, at www.enterprise-glossary.de/en.

If you already use a Data Catalog and want to include SAP or Power BI metadata, our API is built for exactly this purpose. In this case, head to www.bluetelligence.de/en/metadata-api.

Abstract: As software developers in the area of SAP Business & Analytics, we repeatedly encounter “time wasters”, i.e. everyday Business Intelligence processes that could be handled much more efficiently. This article deals with the dependency between business departments that work with dashboards and reports and IT, which in turn processes tickets when errors occur. As a solution, it discusses the BI Self-Service concept, which can help speed up these processes and thus save costs, time and nerves. Specifically, it involves using data cataloging to give business departments insight into the metadata of their key figures and reports, thus relieving the burden on IT and making business processes more efficient.

The Use Case: Usually an Error in a Dashboard

We all know how it goes: Business dashboards are supposed to provide clarity – but if they don’t display the correct values or show error messages, the opposite is of course the case. This is particularly bad if the department notices the error shortly before a meeting in which the dashboard is needed.

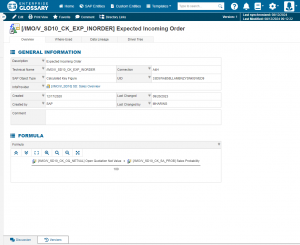

The error often stems from one of the key figures used in the SAP system – especially if the key figure is made up of several other key figures in the system. We call this a ‘nested key figure’. Another term that is often used is the ‘calculated key figure’.

Calculated Key Figures Have Their Pitfalls

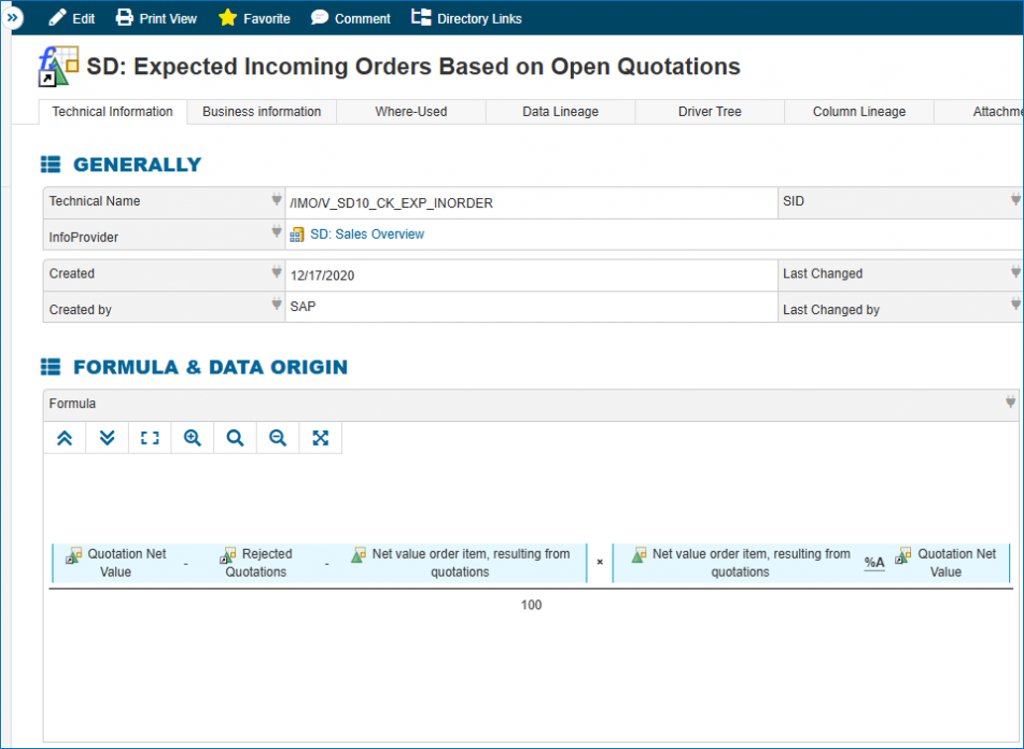

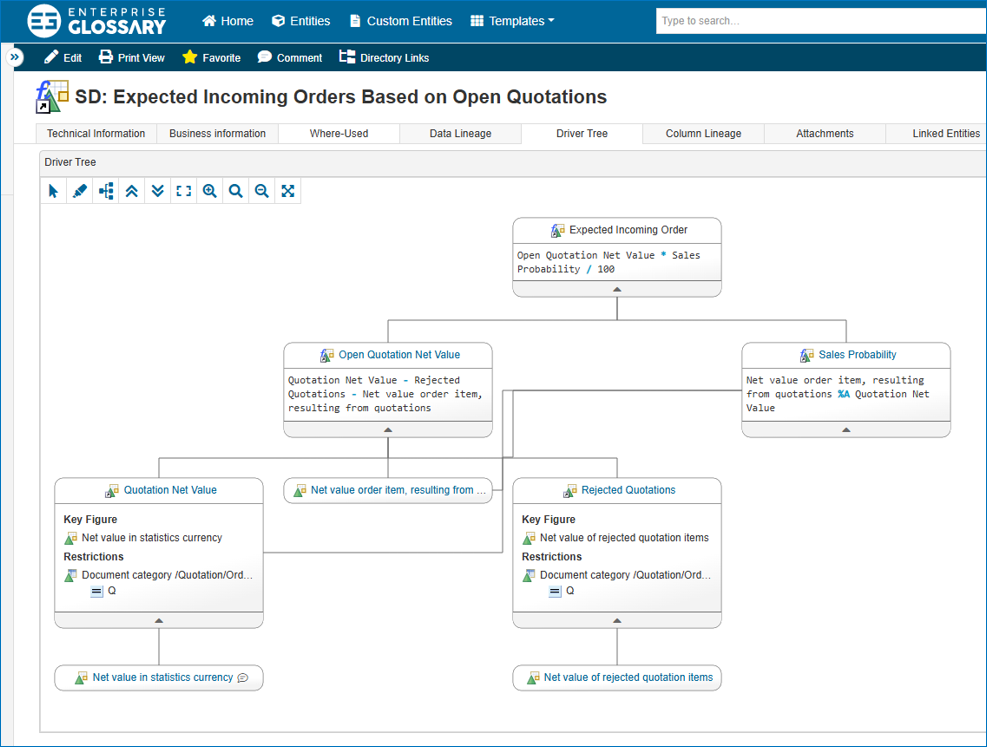

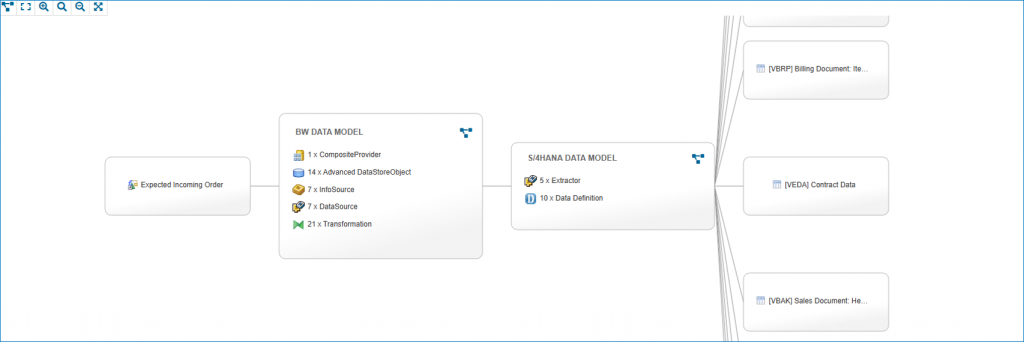

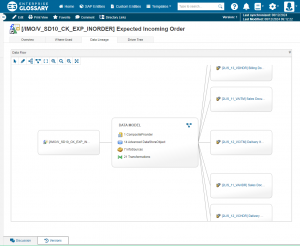

The key figure ‘Expected Incoming Orders’ in a sales dashboard can, for example, be made up of four to six other, equally nested key figures – for example, the sales probability, the open offers, and so on.
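To make the idea of nesting concrete, the following Python sketch models a calculated key figure as a tree and prints every level, roughly the way a driver tree does. The formula for ‘Expected Incoming Orders’ used here is a hypothetical simplification invented for illustration; it is not the actual SAP definition, and the `KeyFigure` class and helper functions are assumptions rather than an SAP or Enterprise Glossary API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class KeyFigure:
    """A basic key figure (no inputs) or a calculated key figure built from other key figures."""
    name: str
    formula: str = ""  # readable formula; empty for basic key figures
    inputs: list[KeyFigure] = field(default_factory=list)


def driver_tree(kf: KeyFigure, depth: int = 0) -> list[str]:
    """Render every nesting level of `kf` as indented lines, depth-first."""
    label = f"{kf.name} = {kf.formula}" if kf.formula else kf.name
    lines = ["  " * depth + label]
    for child in kf.inputs:
        lines.extend(driver_tree(child, depth + 1))
    return lines


def basic_key_figures(kf: KeyFigure) -> list[str]:
    """Collect the non-calculated key figures at the bottom of the tree."""
    if not kf.inputs:
        return [kf.name]
    return [name for child in kf.inputs for name in basic_key_figures(child)]


# Hypothetical formula, for illustration only.
open_offers = KeyFigure("Open Offers")
probability = KeyFigure("Sales Probability")
weighted = KeyFigure("Weighted Offers", "Open Offers * Sales Probability", [open_offers, probability])
confirmed = KeyFigure("Confirmed Orders")
expected = KeyFigure("Expected Incoming Orders", "Weighted Offers + Confirmed Orders", [weighted, confirmed])

print("\n".join(driver_tree(expected)))
print(basic_key_figures(expected))
```

Even in this small example, the top-level key figure depends on two levels of other key figures, which is why an error shown in the dashboard says little about where it actually originates.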

Finding out whether there is an error in one of the many key figures in the SAP system takes a hell of a lot of time.

What exactly is taking so long? As long as the department can only see the error in the dashboard, but not which underlying key figures the incorrectly displayed key figure contains, it can only submit an unspecific support ticket to IT. IT then has to search for the error and investigate the entire background of the key figure in the SAP system. And since IT is known to be swamped with tickets, the problem will not be solved in time for the meeting (or the next one).

As promised, this article is not only about problems but also about solutions. The solution in this case is BI Self-Service, more precisely a Data Catalog. And a driver tree. Let us explain why.

The Solution: SAP Metadata in Any Business Department's Data Catalog

To give business departments more control over their dashboards and relieve IT in equal measure, it is advisable to use a data catalog that also provides an overview of SAP. The prerequisite, however, is that the information displayed is prepared in a way that business departments can understand.

Our Data Catalog, the Enterprise Glossary, creates glossary entries for each key figure of synchronized SAP systems, in which both the technical definition and its calculation with all involved key figures are mapped. With the latest Enterprise Glossary function, the “driver tree”, the formula is even displayed graphically as a network graph, providing an easy-to-understand overview of all levels of the nested key figure (see GIF).

This means that specialist departments without access to the SAP backend can immediately see which key figures are involved in the dashboard. Since they can follow the mathematical calculation of the key figure, they will most likely already be able to tell IT which specific key figure is displayed incorrectly in SAP. IT will then be able to fix the problem in the backend in a much more targeted manner, and much more quickly. The Data Catalog thus acts as a link between business departments and IT and makes life a little easier for everyone. Of course, the function is also useful in day-to-day work to understand how certain values in dashboards come about in the first place.
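The following Python sketch illustrates why seeing the full calculation helps narrow down an error: it evaluates a nested key figure bottom-up and reports the lowest-level key figure that is missing a value or cannot be calculated, instead of only the top-level figure shown in the dashboard. The key figure names, the formula, the values, and the `KeyFigure`/`evaluate` helpers are all hypothetical and only stand in for the idea; they are not how SAP or the Enterprise Glossary evaluates key figures.

```python
from __future__ import annotations

import operator
from dataclasses import dataclass, field

# Arithmetic operators a calculated key figure may use in this sketch.
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul, "/": operator.truediv}


@dataclass
class KeyFigure:
    name: str
    op: str | None = None  # operator for calculated key figures, None for basic ones
    inputs: list[KeyFigure] = field(default_factory=list)


def evaluate(kf: KeyFigure, values: dict[str, float | None]) -> tuple[float | None, list[str]]:
    """Evaluate `kf` bottom-up and return (value, faulty).

    `faulty` names the lowest-level key figures that are missing a value or
    cannot be calculated, so a failure is reported at its root cause.
    """
    if kf.op is None:
        value = values.get(kf.name)
        return (value, []) if value is not None else (None, [kf.name])
    if not kf.inputs:
        return None, [kf.name]  # a calculated key figure without inputs is itself faulty
    results = [evaluate(child, values) for child in kf.inputs]
    faulty = [name for _, names in results for name in names]
    if faulty:
        return None, faulty  # pass the root cause upwards unchanged
    value = results[0][0]
    try:
        for child_value, _ in results[1:]:
            value = OPS[kf.op](value, child_value)
    except ZeroDivisionError:
        return None, [kf.name]  # the calculation itself is the problem
    return value, []


# Hypothetical dashboard key figure: (Open Offers * Sales Probability) + Confirmed Orders
expected = KeyFigure("Expected Incoming Orders", "+", [
    KeyFigure("Weighted Offers", "*", [KeyFigure("Open Offers"), KeyFigure("Sales Probability")]),
    KeyFigure("Confirmed Orders"),
])
print(evaluate(expected, {"Open Offers": 200000.0, "Sales Probability": None, "Confirmed Orders": 50000.0}))
print(evaluate(expected, {"Open Offers": 200000.0, "Sales Probability": 0.25, "Confirmed Orders": 50000.0}))
```

With the first set of values, the result points directly at ‘Sales Probability’, which is the kind of specific information a business department could pass on in a support ticket instead of a general "the dashboard is wrong".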

Conclusion: Using a data catalog with a real-time connection to the SAP systems creates a self-service point that enables more efficient collaboration between specialist departments and IT, whether when searching for errors in dashboards, defining key figures or answering questions about existing reports.

Plastic waste in the world’s oceans is a huge problem. With his Ocean Cleanup Project, the young Dutchman Boyan Slat has set himself the task of actively tackling this problem. We are impressed by his determination and innovative strength and have been supporting the project since last year. So it’s high time we told you about it.

Five trillion pieces of plastic are currently floating in the ocean. However precisely this figure can be quantified, it sounds frightening. In the long term, the pieces of plastic break down into microplastics and cause fatal damage to our ecosystem. In addition to marine life, humans are also affected along the food chain.

The question remains as to what to do about it. Collecting and transporting each piece individually would be neither affordable nor feasible in any reasonable time. For many, however, simply giving up on the oceans is, fortunately, not an option.

Dutchman Boyan Slat decided not to resign himself to helplessness but to take action instead. He made this decision at the age of just 17. Two years later, in 2013, he founded the Ocean Cleanup Project. With a team of up to 100 researchers and engineers, he has continued to tinker with and refine an ingenious system.

The technology is essentially based on plastic tubes arranged in a U-shape. Held to the seabed by weights, these tubes float on the sea surface and, with the help of natural ocean currents, bundle the waste in the middle, where it can be skimmed off after a while. The sea collects its own waste, so to speak.


After the first prototype was successfully deployed, the first major ocean clean-up mission with “System 001”, affectionately known as “Wilson”, was launched in 2018. It began with the Great Pacific Garbage Patch between Hawaii and California, the largest of the five major ocean garbage patches. It is estimated to be 1.6 million square kilometers in size. The goal: to eliminate 50 percent of the Great Pacific Garbage Patch in just five years. To this end, the system has been continuously improved since the start of the mission to make it more effective.

When we at bluetelligence first heard about the Ocean Cleanup Project, we were impressed by Boyan Slat’s tenacity, his passion and not least his visionary solution. It was therefore an easy decision to donate €5,000 to the Ocean Cleanup Project in spring 2018. Since then, we have also continued to support the project with €10 per employee per month.

Boyan Slat acts sustainably and wants to leave the world better than he found it. We at bluetelligence can identify with this. Long-term, resource-saving solutions and persistence in implementing visions are also important to us in product development. In addition, in the spirit of the well-known African proverb, we believe that many small people in many small places doing many small things can change the face of the world. For us, this starts with switching to glass bottles in the office kitchen and continues with electric company cars and donations to great projects like this one. We continue to look for inspiration in this respect and hope to inspire others to contribute to the preservation of our environment.

If you would also like to donate, you can do so directly on the Ocean Cleanup Project website. Please contact us if you have any questions.